AI has entered the classroom faster than any technology before it.

According to EdWeek Research Center data, teacher use of AI nearly doubled in two years, rising from 34% to 61%.

Tools that generate lesson plans, grade essays, and create quizzes have gone from experimental to everyday.

Early fears that AI would replace teachers eventually gave way to promises: AI would save time, reduce workload, personalize learning, and make teaching more sustainable.

But something else is also true.

A February 2026 report from Education Perfect and the Angus Reid Group found that 77% of teachers feel stressed trying to keep pace with new AI tools because the speed of change is overwhelming.

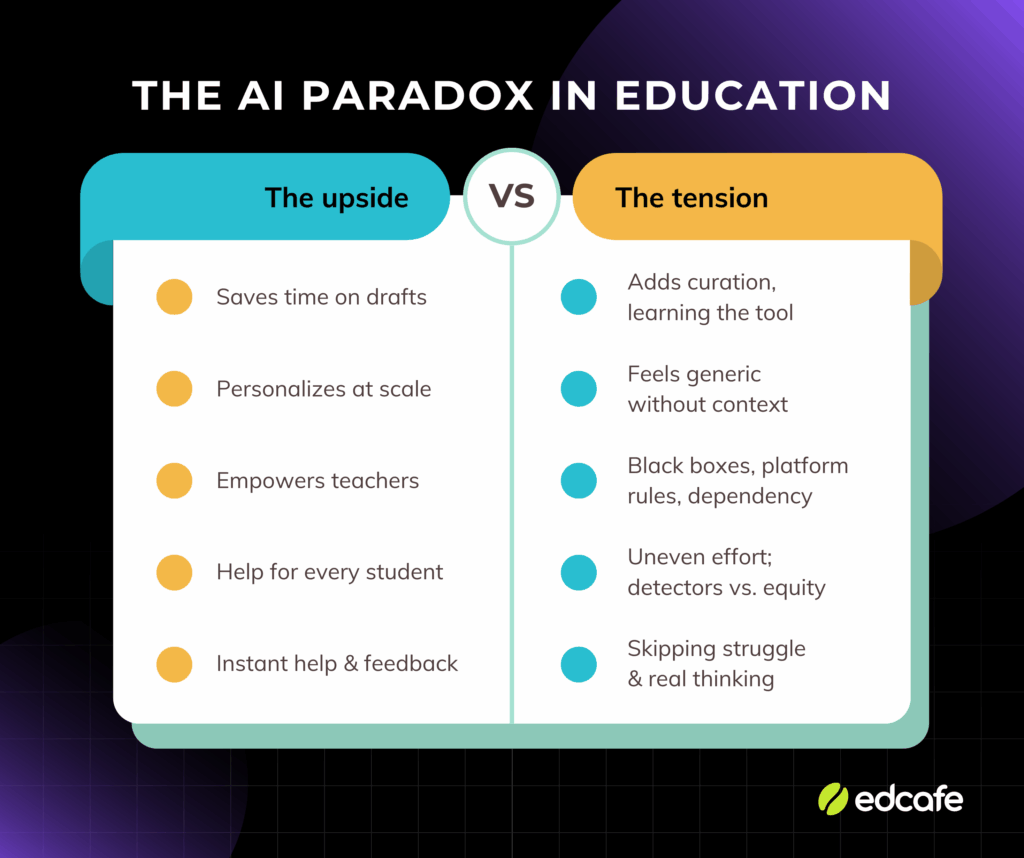

That right there is an AI paradox.

AI brings real benefits, and real tensions. The same tool that saves you time can make you feel busier. The feature that personalizes learning can feel generic. The technology that empowers teachers can leave them feeling less in control.

This article explores five angles of the AI paradox educators are facing right now, and how to navigate them without abandoning the tools or ignoring the problems.

Expectations vs Reality

To understand the AI paradox in education, you need to see the gap between what AI was supposed to do and what teachers are actually experiencing.

What AI was supposed to solve for teachers

The pitch was simple: AI would give teachers time back.

Teachers work an average of 50-55 hours per week, according to the Kuksha Global Benchmark Report surveying 4,000 educators across 50+ countries. Here’s where that time goes:

| Task | Hours per week | % of total time |
|---|---|---|
| Classroom instruction | 12 hours | 24% |
| Lesson preparation | 9.5 hours | 19% |
| Marking and evaluation | 8.2 hours | 16% |
| Administrative work | 5.5 hours | 11% |

Nearly 70% of teachers in the same survey report that administrative work affects the quality of their instruction.

AI promised to handle the repetitive parts:

- Generating differentiated materials in seconds instead of hours

- Drafting personalized feedback for 30 students at once

- Creating quizzes from lesson content automatically

- Writing parent emails and summarizing meeting notes

It also promised personalization at scale.

A recent systematic review published in Smart Learning Environments found that intelligent tutoring systems can tailor instruction to individual student needs, adjusting content difficulty and pacing in real time.

This is something most teachers can’t do alone with 25-30 students.

The vision: teachers spend less time on prep and admin, more time on relationships and instruction. Students get work that meets them where they are.

Why teachers are feeling the tension

Adoption is happening fast. But so is the stress.

The same Education Perfect report (cited above) found that 77% of teachers feel overwhelmed by the pace of new tools.

And the support gap is real:

- Only 35% of teachers believe their district is adapting quickly enough to provide curriculum-aligned AI tools

- 65% say their district struggles to provide ethical frameworks for AI use

- Teachers are left to figure out which tools are safe, effective, and worth the learning curve

That’s the tension: teachers are expected to adopt AI to save time, but the adoption process itself is adding work.

The promise was efficiency. The reality is a new kind of workload, and that’s the AI paradox in those numbers: adoption without relief.

1. If AI saves time, why do teachers feel busier?

AI generates a lesson plan in seconds. Then you spend the next stretch of time editing it.

That’s the AI paradox here: time saved on generation, time spent on curation.

A study from Stanford’s SCALE Initiative examining 1,043 teachers found that AI lesson-planning tools reduced planning time but created new curation work: reviewing content for accuracy, cultural relevance, and alignment with teaching context.

Why this happens:

- AI doesn’t know your classroom. It generates generic content. You add the context, the examples students will recognize, the language that matches how you teach.

- Quality varies wildly. One output is nearly perfect. The next has factual errors or awkward phrasing. You can’t trust it without checking.

- The learning curve costs time up front. Figuring out how to prompt well, which tools work for which tasks, and when to just start from scratch is invisible work that doesn’t show up in “time saved” claims.

How to navigate it:

The key is working from materials you already have instead of starting from a generic prompt.

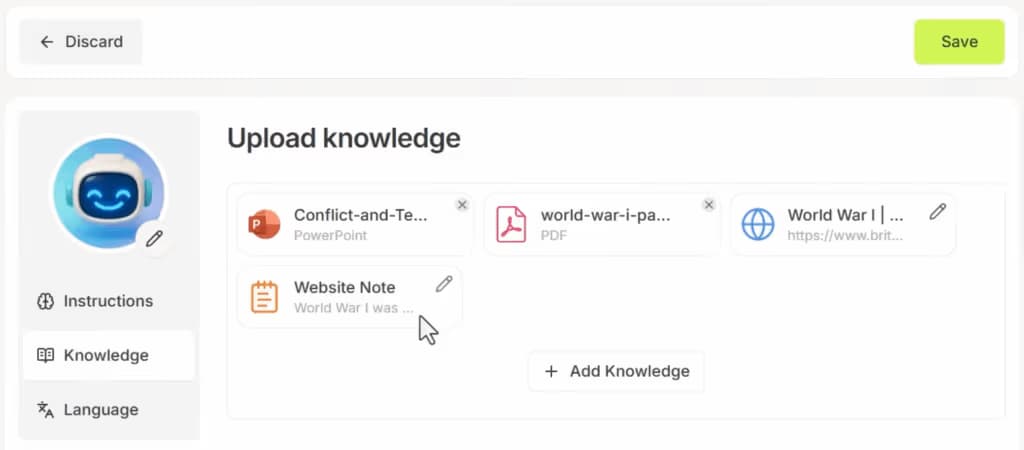

For example, Edcafe AI lets you generate classroom-ready materials from multiple input sources:

- paste your lesson notes,

- upload a PDF or slide deck,

- drop in a YouTube URL,

- or combine several documents at once.

The AI then builds from whatever existing resource you have on hand.

The same flexibility applies whether you’re creating differentiated reading passages or building the knowledge base for a student-facing chatbot.

Feed it your materials, and the output aligns with what you actually have.

That’s intentional AI tool use: you control the input, so you spend less time fixing the output.

2. If AI personalizes learning, why does everything feel generic?

AI can adapt content to different learning levels. It can generate three versions of the same lesson in seconds.

But without direction from you, the output can feel flat.

AI personalizes at scale, but it lacks nuance on its own.

While it optimizes for patterns in training data, it doesn’t know that your class has been talking about climate change all month, or that three students are obsessed with basketball, or that you always use a particular analogy to explain fractions.

True personalization requires context AI doesn’t have.

Why this happens:

- AI differentiates by level, not by learner. It can simplify vocabulary or add scaffolding. But it can’t tailor content to individual interests, prior knowledge, or cultural background without you telling it to.

- The output reflects average teaching, not your teaching. AI is trained on millions of examples. The result is competent but can be generic.

- Personalization takes input. If you feed AI a generic prompt, you get generic output. The more specific your instructions, the less generic the result.

How to navigate it:

Use AI to generate the scaffold. Then layer in the personal touches yourself.

In Edcafe AI, the Additional Instructions field lets you shape the output before it’s generated.

Specify student level, align to standards, adjust difficulty, or add context about what your class has been studying. The more specific you are, the less editing you’ll do after.

After generation, you can customize further:

- Add personal notes to learning material instructions: include links, images, or voice recordings that connect to your lesson

- Edit quiz question explanations to match your teaching language

- Add images to questions to make them more concrete or relevant

- Control assignment feedback that updates in real time on the student side

The AI gives you the structure. You add the context that makes it truly yours.

3. If AI empowers teachers, why does it feel like we’re losing control?

AI tools promise to give teachers more flexibility.

But they also introduce new dependencies.

A multiyear study from Monash University with 76 middle school teachers found that meaningful control over AI systems requires teachers to understand and influence AI decisions.

When teachers can’t see why AI made certain choices, trust breaks down.

Why this happens:

- Black-box grading removes teacher judgment. When AI auto-grades short answers or essays, you don’t see the reasoning. You see a score. If a student challenges it, you’re defending a decision you didn’t make.

- Platform decisions shape pedagogy. The tool decides what question types are available, what data you can see, how feedback is delivered. Your teaching adapts to fit the tool instead of the other way around.

- Dependence creates risk. If the tool changes its pricing, removes a feature, or shuts down, you’re left rebuilding workflows from scratch.

How to navigate it:

Choose tools that keep you in the loop.

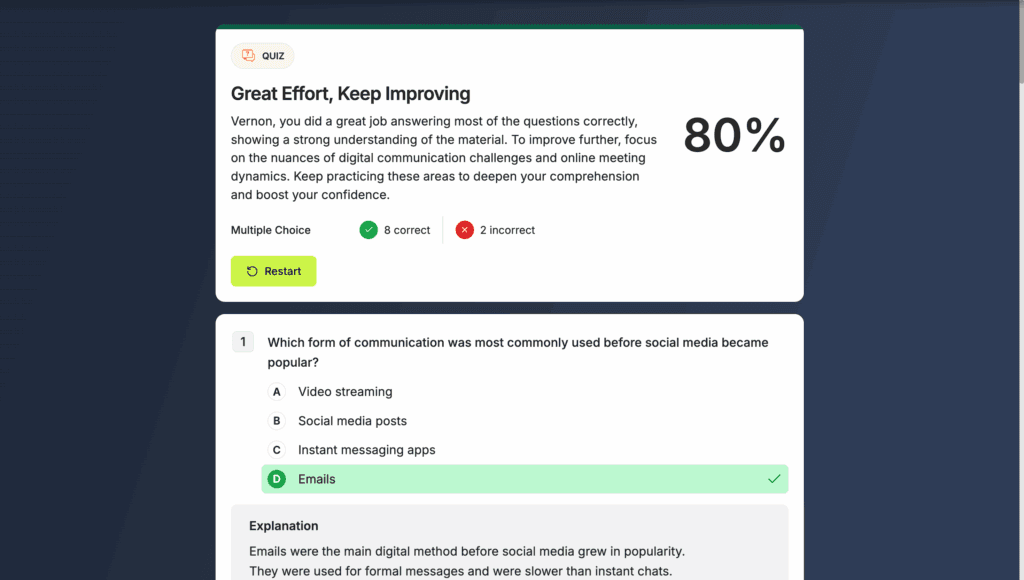

In Edcafe AI, grading and feedback work with teacher oversight, not around it:

- Multiple-choice questions auto-grade instantly. Students see whether they’re correct. You see response breakdowns: which answer each student chose, how many picked each option, and where they struggled.

- Short answer questions get AI-generated feedback. Students receive personalized responses based on what they wrote.

- Real-time analytics show patterns as they happen. Who submitted. Who’s struggling. Which questions tripped up the most students. You see the data live, without waiting for a report.

- Export when you need records. Download responses as CSV or Excel for your gradebook or evidence of progress.

The key is visibility. You should always know what the AI is doing, see the results before students do, and have the ability to step in when needed.

4. If AI helps students succeed, why does it feel unfair?

AI can explain concepts students don’t understand.

The promise is support: every student gets help when they need it, not just when the teacher is available.

But the tension is that while some students use AI to learn, others use it to avoid learning entirely.

There’s a gap in effort.

Between May and December 2025, the percentage of students using AI for homework jumped from 48% to 62%. Some are using it to brainstorm or get better explanations. Others are using it to generate finished work they submit without changes.

AI detection tools don’t solve this.

They were supposed to level the playing field by catching students who use AI inappropriately. Instead, they’ve created new problems.

AI detectors flag non-native speakers at higher rates than native speakers, even when the work is entirely human-authored.

That right there is a new form of bias.

For a deeper look at why AI detection tools are failing classrooms, see our article on why it’s time to say goodbye to AI detection tools.

How to navigate it:

- Set clear expectations about AI use. Tell students when AI is allowed, how it should be used, and what needs to be their own thinking. Vague rules create confusion and inconsistent enforcement.

- Design assignments that show thinking, not just answers. Drafts, reflections, in-class checkpoints, process documentation. These reveal learning in ways a final product can’t.

- Avoid AI detection tools as evidence. Probability scores are not proof. If you suspect a student bypassed learning, have a conversation. Ask them to explain their thinking. That tells you more than any detection score.

- Teach students how to use AI as a learning tool. Show them the difference between using AI to understand a concept and using it to avoid the work. Model responsible use in class.

One thing to note: fairness isn’t about banning AI.

It’s about creating conditions where students who do the work aren’t disadvantaged by students who don’t, and where the tools we use to enforce that don’t create new inequities.

5. If AI helps students learn, why are we worried about thinking skills?

AI can provide instant feedback.

But there’s a risk.

Students may become dependent. They skip the struggle. They optimize for task completion instead of understanding.

Learning often requires barriers.

AI lowers the barrier to getting answers. But productive struggle is essential for building lasting skills and deep understanding. When AI does the thinking for students, those skills don’t develop.

The difference is how it’s used.

A student asks AI to explain a concept, then tries the problem again. That’s learning.

A student asks AI for the answer, copies it, and moves on. That’s task completion.

Both look like “using AI.” But only one builds understanding.

How to navigate it:

The key is giving students AI support that scaffolds thinking instead of replacing it.

Edcafe AI’s chatbot feature lets you create student-facing chatbots constrained to your rules. Upload your lesson materials as the knowledge base: your slides, notes, readings, etc. Then set instructions for how the chatbot should behave:

- Ask guiding questions instead of giving direct answers

- Only refer to information inside the uploaded documents

- Prompt students to try solving first before offering help

- Provide hints, not solutions

You control what the chatbot knows and how it responds. Students get 24/7 support, but within boundaries that preserve the learning process.

For example, instead of a chatbot that solves math problems, you create one that asks “What strategy did you try?” or “What part of the problem is confusing?”

AI can help students learn. But it can also prevent them from learning how to learn.

The difference is whether the tool extends their thinking or does it for them. And with the right constraints, you can make sure it’s the former.

Navigating the AI Paradox

The AI paradoxes aren’t going away.

That’s the nature of any tool powerful enough to change how work gets done.

This pattern isn’t new.

The printing press made books accessible but threatened scribes.

Calculators made computation instant but sparked debates about mental math.

The internet made information abundant but raised questions about depth.

AI is no different. Every tool that increases capability also introduces tension.

The question isn’t whether to use AI. It’s how.

Teachers who succeed with AI are the ones who use it strategically.

They know which tasks benefit from speed and which require their judgment.

They use AI to handle high-volume, low-stakes work so they can focus on relationships, nuance, and the decisions that actually shape learning.

Don’t wait for the AI paradox to resolve. Instead, start navigating it:

- Acknowledge that the tool saving you time is also adding work, and decide whether the tradeoff is worth it

- Accept that AI-generated content will feel generic unless you add the context that makes it yours

- Choose tools like Edcafe AI that keep you in control instead of automating you out of the process

- Teach students how to use AI responsibly instead of relying on flawed detection

- Constrain AI support so it extends thinking instead of replacing it

AI is a tool, not a solution!

The pedagogy, the relationships, the judgment: that’s still you.

The AI paradox exists because AI is powerful enough to help and disrupt at the same time.

The teachers who navigate it best are the ones who stay critical, stay intentional, and remember that technology serves learning, not the other way around.

FAQs

What is the AI paradox in education?

The AI paradox in education is that the same technology that can save time and personalize learning can also add workload, stress, and new fairness questions, often at the same time.

Is AI actually saving teachers time?

It depends. AI saves time on creation but adds time for review and customization. The net benefit varies by task and teacher comfort level. Use AI for high-volume, low-stakes tasks where speed matters more than perfection. For high-stakes content that requires nuance, manual creation is often faster.

Should I be worried about students becoming too dependent on AI?

Yes, but dependence isn’t inevitable. Students who use AI to understand concepts and then try problems themselves are learning. Students who copy answers without thinking aren’t. The difference is how you frame AI use and what tasks you assign. Design work that shows thinking, not just completion, and teach students when AI helps vs. when it gets in the way.

How do I know if an AI tool is worth the learning curve?

Start with one feature that addresses your biggest pain point. If it saves more time than it costs within two weeks, keep going. If you’re spending more time fixing AI output than the task would take manually, try a different tool or approach. For example, Edcafe AI lets you start with quiz generation or lesson planning using materials you already have. Test one feature, see if it fits your workflow, then expand from there. Set a time cap and be willing to walk away if it’s not working.

Do I need to use AI to be a good teacher?

No. AI is a tool, not a requirement. Good teaching is about relationships, pedagogy, and judgment. AI can support that, but it can’t replace it. If AI doesn’t fit your workflow or teaching style, you’re not falling behind. Use what works for you and your students.

What’s the biggest mistake teachers make with AI?

Using it for everything. AI works best for specific, high-volume tasks, not as a replacement for teacher judgment. The mistake is treating AI as a solution instead of a tool. Keep control over high-stakes decisions, preserve tasks where struggle builds skills, and stay critical of outputs.

Should I use AI detection tools to catch students using AI?

No. AI detection tools produce unreliable results and disproportionately flag non-native speakers and strong writers. Probability scores are not proof. If you’re concerned about AI misuse, design assignments that show thinking through drafts, reflections, or in-class checkpoints, and have conversations with students instead of relying on detection scores.