AI can generate a quiz for you in minutes, even seconds.

But speed doesn’t equal quality.

This shouldn’t be news, but a bad quiz is still a bad quiz even when it’s generated fast.

Research from the National Council on Measurement in Education found that 34% of AI-generated assessment items contained at least one quality issue that would affect student scores if deployed without review.

That’s just one of the many AI paradoxes in education. AI saves time on creation, but adds time for review. The tool that promises to lighten your workload can make you feel busier.

So the question is less whether AI can create quizzes faster and more whether those quizzes actually work.

That’s what this guide is for. Here, you’ll find how to create effective quizzes using AI.

You’ll learn:

- How AI quiz making works — and what you still control as the teacher

- Your role in AI quiz making — alignment, formative vs. summative use, and learning objectives

- How to design an effective AI quiz — from building with the right content to acting on student data

- Best practices for AI-generated quizzes — prompting strategies, review workflows, and balancing automation with oversight

- What to consider when using AI for quizzes — privacy, data, and teacher responsibility

- AI quiz tools to get started with — how and where to get started with AI quiz tools.

How AI Quiz Making Works

Whether you’re new to AI quiz tools or already using them, this guide will help you design better assessments with less effort.

Let’s start with what AI quiz making actually is.

What AI Quiz Making Is

AI quiz making is using artificial intelligence to generate quiz questions from content you provide.

Most people know the first way: going to a chatbot like ChatGPT and prompting it. “Create 10 multiple-choice questions on photosynthesis for Grade 7.” That’s using large language models (LLMs) like GPT-4, Claude, or Gemini directly.

But there’s another way: dedicated quiz generators built specifically for teachers. These tools use AI under the hood but wrap it in a workflow designed for classroom use.

Here’s how each works:

General-purpose language models (ChatGPT, Claude, Gemini) generate quiz questions when you prompt them.

You paste your content, describe what you want, and the AI produces a draft.

Research published on arXiv comparing GPT-3.5 and GPT-4 for quiz generation found that GPT-4 demonstrates superior ability to generate precise, challenging questions.

But creating high-quality distractor options (wrong answers that reveal misconceptions) remains challenging for both.

These work well for quick, one-off quizzes but require manual formatting and don’t handle delivery or grading.

Dedicated quiz generators (Edcafe AI, Quizgecko, Conker) are built specifically for quiz creation.

They accept multiple input sources (documents, videos, URLs), generate questions with one click, and handle the full workflow: creation → customization → delivery → grading → analytics.

Both use AI. The difference is whether you’re prompting a general tool or using one designed for the full quiz workflow.

| | Traditional Quiz Creation | AI Quiz Creation |
|---|---|---|
| Primary work | Writing questions from scratch | Reviewing and refining a generated draft |
| Time to first draft | 30-60 minutes | 5-15 minutes |
| Main skill required | Question writing and distractor crafting | Evaluation and alignment checking |
| Workflow | Creation-heavy | Curation-focused |

With traditional creation, most work happens upfront: writing, crafting, formatting.

With AI, the work moves to the back end: reviewing, refining, and ensuring alignment with what you actually taught.

Learn how to create interactive quizzes with AI from topic, document, or YouTube.

When to Use AI for Quizzes

AI quiz making isn’t equally useful in every situation.

It works best at specific moments in your teaching workflow.

Research from Stanford’s SCALE Initiative examining 1,043 teachers found that AI tools reduced planning time but required teachers to curate content for accuracy, cultural relevance, and alignment with their teaching context.

The same pattern applies to quizzes.

AI helps most when:

- You have concrete source materials — documents, videos, or webpages you’ve already used in class that AI can turn into questions

- You need a usable draft quickly — and you can steer the output upfront with specific instructions about difficulty, question types, or learning objectives

- You need flexibility to iterate — generating a draft, refining weak questions, and adjusting before committing

- Delivery speed matters — getting quizzes to students via link, QR code, or LMS without manual export steps

- You need instant feedback — auto-grading and real-time dashboards that show you what to reteach while it still matters

AI is less useful for high-stakes summative assessments, questions testing exact wording, first-time teaching when you’re still learning student misconceptions, or situations where your existing quiz already works.

For a complete breakdown of when to use vs. skip AI for quizzes, including gray-area situations, a decision checklist, and specific examples, see our dedicated guide.

Your Role in AI Quiz Making

AI generates the questions. But you control everything that matters.

A good quiz reveals understanding, surfaces misconceptions, and drives what you teach next.

AI handles execution, but you should still own the design decisions that make a quiz effective.

Use AI Quizzes as a Learning Tool

Quizzes are learning tools that strengthen memory and guide instruction.

Research by the National Science Foundation on the testing effect shows that students who practiced retrieval through quizzes demonstrated 50% better long-term retention compared to students who used elaborative studying methods like concept mapping.

AI quizzes work best at these learning touchpoints:

- Start of class — Activate prior knowledge before introducing new material

- Mid-lesson — Check comprehension before moving forward (hinge questions)

- End of class — Reinforce learning and highlight gaps (exit tickets)

- Between units — Review core concepts before building on them

- Before exams — Low-stakes practice that reduces anxiety while building confidence

The key is frequency and low stakes.

When quizzes feel like practice instead of judgment, students engage more honestly with what they don’t understand.

Then, use quiz data to adapt your instruction. Quiz results tell you:

- Who’s ready to move on — and who needs more time with the concept

- Which questions tripped up most students — revealing where your explanation didn’t land

- What misconceptions are common — so you can address them directly instead of assuming students “got it”

The quiz is supposed to be the beginning of what you do next.

For more on using formative assessments to adapt your teaching with AI, see our dedicated guide.

Design AI Quizzes Based on Learning Goals

Not all quizzes serve the same purpose. The way you design and deliver a quiz should match how you plan to use it.

The first decision: formative or summative?

Formative quizzes monitor learning while instruction is ongoing.

They’re low-stakes, often ungraded, and designed to give you and your students feedback you can act on immediately.

Think: hinge questions, exit tickets, practice sets.

Summative quizzes evaluate learning at the end of a unit or course.

They’re typically graded, higher-stakes, and used to measure mastery against a standard.

Think: unit tests, final exams, benchmark assessments.

The difference isn’t just timing. It’s what you do with the results.

Formative quiz results inform your next lesson (reteach, regroup, or move on). Summative quiz results assign grades and report progress.

How this changes quiz design:

| | Formative Quiz Design | Summative Quiz Design |
|---|---|---|
| Feedback timing | Instant, after each question | Only at the end |
| Stakes | Low, often ungraded | High, graded |
| Student experience | Safe to make mistakes | Performance matters |
| Review rigor | Quick check for clarity | Careful review for precision and fairness |
| Alignment scope | Matches recent lessons | Covers full unit scope |

The same AI-generated questions can serve both purposes.

But delivery mode and review rigor should match the stakes.

For 8 high-impact formative assessment examples you can create with AI, including vocabulary cards, exit tickets, and quick writes, see our dedicated guide.

Align AI Quizzes to Learning Objectives

A quiz can have perfectly written questions and still fail if it’s testing the wrong things.

Alignment means your quiz measures what you actually taught and what students are expected to learn.

Why this control matters:

Misaligned quizzes waste time. Students study one thing, get tested on another, and neither you nor they get useful feedback.

When quizzes match objectives, students know what matters and where to direct their effort. Results become actionable.

If a quiz is aligned, poor performance tells you exactly what to reteach.

How to ensure alignment:

Before you assign any quiz, whether AI-generated or manual, ask:

- Does each question connect to a specific learning objective from this unit?

- Are you testing what you taught, or what the source material happened to include?

- Do question types match the cognitive level you’re assessing? (Recall vs. application vs. analysis)
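
If you keep your questions in a spreadsheet or script, the checklist above can be sketched as a small coverage report. This is a minimal illustration, not a feature of any quiz tool: the `Question` fields, the objective IDs, and the `alignment_report` helper are all hypothetical, and it assumes you tag each question with an objective yourself during review.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Question:
    text: str
    objective: Optional[str]  # objective ID you assigned during review, or None
    level: str                # "recall", "application", or "analysis"


def alignment_report(questions, unit_objectives):
    """Return (questions tied to no unit objective, objectives no question covers)."""
    unaligned = [q.text for q in questions if q.objective not in unit_objectives]
    covered = {q.objective for q in questions}
    uncovered = [o for o in unit_objectives if o not in covered]
    return unaligned, uncovered


# Hypothetical example: one question drifted away from the unit's objectives.
questions = [
    Question("What gas do plants absorb during photosynthesis?", "OBJ1", "recall"),
    Question("Who discovered penicillin?", None, "recall"),
]
unaligned, uncovered = alignment_report(questions, ["OBJ1", "OBJ2"])
print(unaligned)  # questions to cut or rewrite
print(uncovered)  # objectives that still need a question
```

The report only flags gaps. Deciding whether a flagged question should be rewritten or removed is still a judgment call.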

With AI quiz generators, alignment doesn’t happen automatically.

The tool generates from your source material, but it doesn’t know your instructional sequence, what you emphasized in class, or which skills matter most for your students.

That’s why review matters.

Before you assign, check whether each question tests what you actually taught, not just what the AI found in the source.

Quizzes are just one format in a larger ecosystem of educational content. Different formats serve different purposes: quizzes check understanding, flashcards build recall, reading passages develop comprehension, and projects foster creativity.

For more on educational content formats that work best, see our dedicated guide.

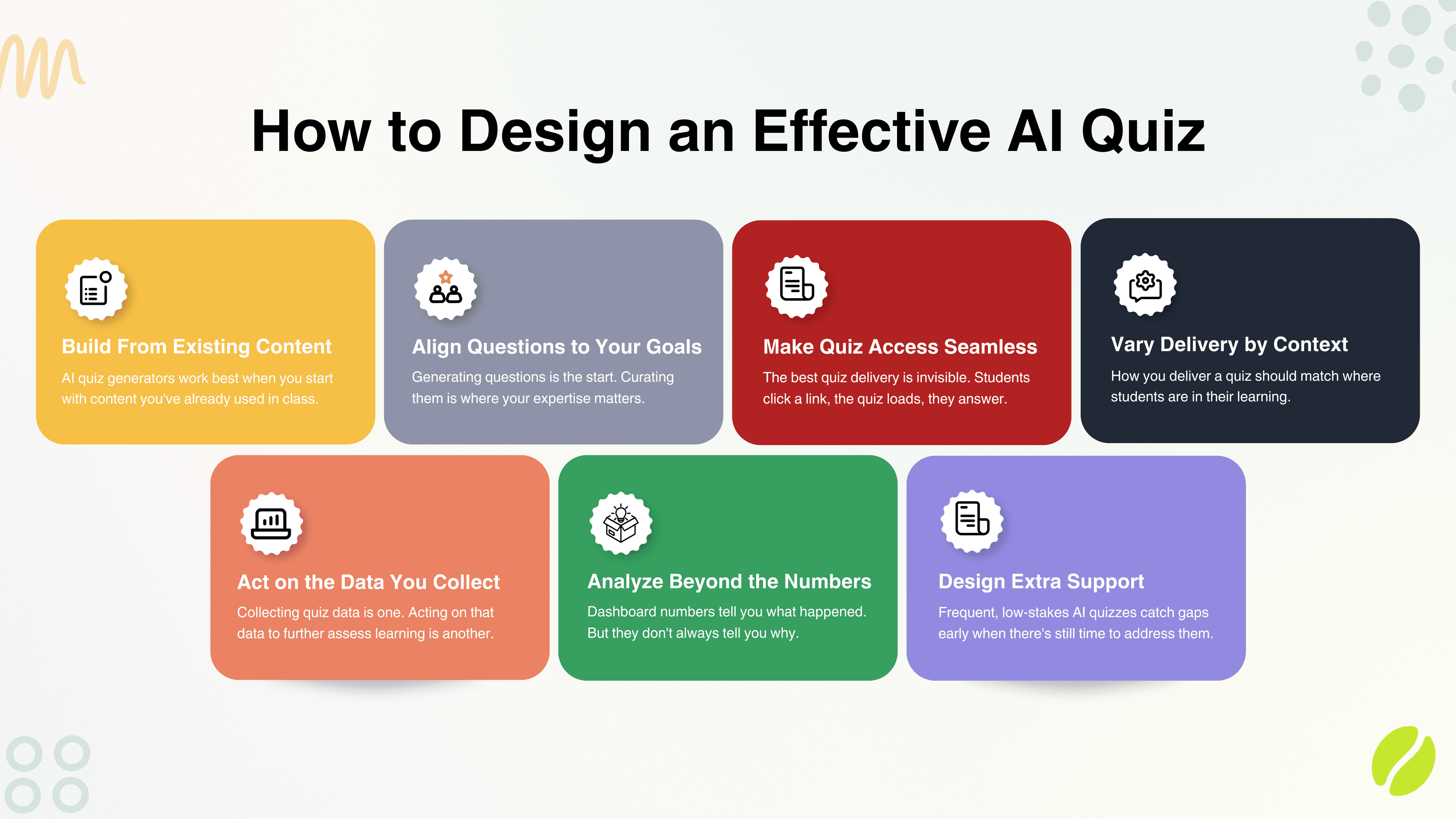

How to Design an Effective AI Quiz

You know your role. Now here’s how to put it into practice.

The principles matter. But they only work when you apply them to the decisions you make every time you create a quiz.

These practices turn “I control the design” into concrete actions.

1. Build From Existing Content

AI quiz generators work best when you start with content you’ve already used in class.

Not generic internet searches. Not random Wikipedia articles.

The content you actually taught from.

Here’s why: the quality of your quiz depends entirely on the quality of your source material.

If you feed AI a textbook chapter you never covered, it’ll generate questions on content your students never learned. If you upload slides from someone else’s lesson, the quiz won’t reflect your emphasis or teaching style.

Start with materials your students have already seen:

- Lesson slides or notes — What you presented in class becomes the quiz content

- Assigned readings — Chapter excerpts, articles, or passages students were supposed to read

- Video lessons — Whether you recorded or assigned them, if students watched it, it’s fair game

- Class handouts or worksheets — Study guides, practice problems, or concept maps used during instruction

The tighter the connection between source material and what students experienced, the more aligned your quiz will be.

In Edcafe AI, you can create quizzes from multiple input sources including topic, text, webpage, and documents.

What makes source material effective:

The best sources are focused and directly tied to learning objectives.

A 30-page textbook chapter generates too many possible questions. A 3-page excerpt on a specific concept gives you a focused quiz.

A full-length documentary creates scatter. A 10-minute clip you paused and discussed in class creates precision.

When you control the input, you control the output.

Even YouTube videos can serve as source material for AI quizzes. Here’s a quick guide showing how to create quizzes from YouTube videos.

2. Align Questions to Your Goals

Generating questions is the start. Curating them is where your expertise matters.

AI doesn’t know which concepts you emphasized, which misconceptions your students struggle with, or which cognitive skills you’re actually assessing.

That’s your job.

In Edcafe AI, you can shape the quiz by choosing question types and adding instructions, so the generated questions match the goals you set.

Question types should match your goal:

Not all questions serve the same purpose.

- Multiple choice — Fast, scalable, good for recall and concept recognition

- True/false — Quick comprehension checks, useful for identifying misconceptions

- Short answer — Requires retrieval and phrasing in students’ own words

- Fill-in-the-blank — Tests recall of specific terms or facts

Choose based on what you’re trying to measure, not what’s easiest to generate.

Review every question before you assign it:

Go through the generated quiz and ask:

✅ Does this question test what I taught? — Not what the source material mentioned in passing, but what you actually covered in class.

✅ Is this the right difficulty level? — Too easy wastes time. Too hard creates frustration and bad data.

✅ Does this match the cognitive skill I’m assessing? — Recall questions can’t measure application. Analysis questions shouldn’t just test memorization.

✅ Are the answer choices clear and distinct? — Vague options or two “correct” answers undermine the question.

If a question doesn’t pass these checks, either edit it or delete it.

You’re not locked into the first draft:

Good AI quiz tools let you edit questions, rewrite answer choices, adjust point values, and reorder items.

When using Edcafe AI, customization options are available after generation. Add images, regenerate distractors and correct answer explanations, and more.

Use that control. The AI gives you a draft. You decide what students see.

3. Make Access Seamless for Students

A well-designed quiz doesn’t matter if students can’t take it.

Friction kills completion. Complicated login processes, clunky platforms, or unclear instructions mean students give up before they start.

Reduce barriers to access:

The best quiz delivery is invisible. Students click a link, the quiz loads, they answer questions.

No account creation. No password resets. No “which platform is this on again?”

- Direct links — Send via email, LMS, or messaging. One click, they’re in.

- QR codes — Project on screen or print on handouts. Students scan and start.

- LMS integration — If your school uses Canvas, Google Classroom, or Schoology, embed the quiz directly where students already work.

The fewer steps between “here’s the quiz” and “student completes it,” the better your completion rates.

Good AI quiz tools build assignment into the workflow. In Edcafe AI, for example, you can share a quiz with students via link or QR code, with direct integrations for Google Classroom, Microsoft Teams, and LMS platforms.

Make it work on any device:

Students take quizzes on phones, tablets, and laptops.

If your quiz only works on desktop, you’ve just excluded everyone who left their laptop in their locker or prefers their phone.

Mobile-responsive design isn’t optional anymore.

Timing and availability matter:

Students need to know when the quiz is available and how long they have to complete it.

Set clear windows: “This quiz opens Monday at 8 AM and closes Wednesday at 11:59 PM.”

And decide upfront: is this timed (students must finish in 10 minutes once they start) or untimed (students have the full window to complete it at their own pace)?

Clarity here prevents confusion and complaints later.
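
To make the timed versus untimed distinction concrete, here is a minimal sketch of how an availability window and an optional time limit interact. The `QuizWindow` class and its field names are hypothetical and not taken from any particular quiz platform; the dates are placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class QuizWindow:
    opens: datetime
    closes: datetime
    time_limit: Optional[timedelta] = None  # None means the quiz is untimed

    def is_open(self, now: datetime) -> bool:
        """True while students are allowed to start or continue the quiz."""
        return self.opens <= now <= self.closes

    def deadline_for(self, started: datetime) -> datetime:
        """Latest moment a student who started at `started` may submit."""
        if self.time_limit is None:
            return self.closes
        # A timed attempt still cannot run past the end of the window.
        return min(started + self.time_limit, self.closes)


# Opens Monday 8 AM, closes Wednesday 11:59 PM, 10 minutes per attempt.
window = QuizWindow(
    opens=datetime(2025, 3, 3, 8, 0),
    closes=datetime(2025, 3, 5, 23, 59),
    time_limit=timedelta(minutes=10),
)
print(window.deadline_for(datetime(2025, 3, 4, 9, 0)))
```

Note the edge case this surfaces: a student who starts a timed quiz four minutes before the window closes gets four minutes, not ten. That is worth telling students upfront.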

There are many ways students can access your AI quizzes. For one, here’s how to make an AI quiz and export it straight to Google Forms.

4. Vary Delivery by Context

How you deliver a quiz should match where students are in their learning, and what you need to know.

A warm-up check at the start of class is different from a unit review. An exit ticket is different from a practice test.

The same AI-generated question set can serve all of these, but delivery mode changes everything.

Match format to moment:

- Live, synchronous delivery — Best for in-class moments where you want immediate visibility. All students answer at the same time and you see results on the spot.

- Asynchronous, self-paced — Best for homework, review, or make-up work. Students complete on their own schedule within a set window.

- Timed — Adds urgency and mimics test conditions. Use when you want students to retrieve without overthinking.

- Untimed — Reduces anxiety and measures understanding more accurately. Use when the goal is reflection, not speed.

This also means deciding what type of assessment the quiz is meant to be. In Edcafe AI, you can switch between quiz modes to fit your instructional needs.

Class size and subject matter also affect the right approach:

With smaller groups, you can pause and discuss wrong answers in real time.

With larger groups, you need the data to do that work for you, surfacing patterns before you lose the teaching moment.

Delivery shapes the experience.

Students who know a quiz is low-stakes, untimed, and for their benefit engage differently than students who think everything counts.

Be clear about purpose before they start.

Telling students “This is just to check in. It won’t affect your grade.” can by itself change how honestly they respond.

5. Act on the Data You Collect

Collecting quiz data and acting on it are two different things.

Research from the University of Alberta published in the Journal of Computer Assisted Learning found that simply increasing the frequency of formative assessments doesn’t consistently improve student performance.

What matters is the conditions under which that data gets used.

In other words: more quizzes don’t help if nothing changes because of them.

What to look for in the data:

- High error rates on specific questions — Didn’t land in class. Needs a reteach.

- High error rates across most questions — The whole concept needs revisiting, not just one question.

- Wide spread in scores — Some students are ready to move on; others aren’t. Time to differentiate.

- Consistently correct answers — You can move forward. Don’t slow the whole class for what everyone already knows.
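
If your tool lets you export results, the four patterns above can be sketched as a simple triage function. Treat this as an illustration under stated assumptions: the `triage` name, the 50% error threshold, the 0.25 spread threshold, and the order in which the patterns are checked are all choices made for this example, not research-backed cutoffs.

```python
from statistics import mean, pstdev


def triage(responses, error_threshold=0.5, spread_threshold=0.25):
    """responses: {student: [True/False per question]}, all lists the same length."""
    students = list(responses)
    n_questions = len(responses[students[0]])

    # Share of students who missed each question.
    error_rates = [
        mean([0 if responses[s][q] else 1 for s in students])
        for q in range(n_questions)
    ]
    # Each student's fraction of correct answers.
    scores = [mean([1 if ok else 0 for ok in responses[s]]) for s in students]

    hard = [q + 1 for q, rate in enumerate(error_rates) if rate >= error_threshold]

    if len(hard) > n_questions / 2:
        action = "revisit the whole concept"
    elif hard:
        action = "reteach specific questions"
    elif pstdev(scores) >= spread_threshold:
        action = "differentiate"
    else:
        action = "move on"
    return {"hard_questions": hard, "action": action}


# Hypothetical class of three students, four questions.
results = {
    "A": [True, True, False, True],
    "B": [True, True, False, True],
    "C": [True, False, False, True],
}
print(triage(results))
```

The output is a starting point for your own read of the results, not a verdict. A question everyone missed might be badly worded rather than badly taught.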

When you create quizzes in Edcafe AI, the workflow extends to data and analytics: you get real-time insights from student submissions so you can act on what they show.

The window for acting is short:

Quiz data is most useful immediately after students take the quiz, while the content is still fresh, instruction is still ongoing, and there’s time to adjust.

Waiting a week to review results and then moving on anyway wastes what the quiz told you.

With good AI quiz tools, results are available the moment students submit. Use that window.

What acting on data looks like in practice:

It doesn’t have to be a full reteach. Sometimes it’s two minutes at the start of the next class: “Most of you missed question 4. Here’s where the confusion usually is.”

That’s it. A two-minute correction grounded in actual data can be more effective than re-teaching an entire concept from scratch.

6. Analyze Beyond the Numbers

Dashboard numbers tell you what happened. They don’t always tell you why.

A class average of 62% on a quiz tells you students struggled. It doesn’t tell you whether they misunderstood the concept, misread the question, ran out of time, or never engaged with the material to begin with.

That’s the gap between collecting data and actually understanding it.

Some teachers annotate results manually. That works, but it takes time most teachers don’t have.

Edcafe AI approaches this differently with its analytics assistant.

Instead of leaving teachers to interpret raw dashboards on their own, it lets you have a conversation with your quiz data.

Ask it which students struggled with a specific concept, what the most commonly missed question was, or where performance dropped off, and it responds using the actual submissions from your quizzes as its knowledge base.

It’s the difference between reading a report and asking the right questions of it.

7. Design Extra Support for Students Who Struggle

Quiz data tells you who needs more help.

What you do with that information determines whether struggling students catch up or fall further behind.

A meta-analysis of 32 experimental studies published in The Asia-Pacific Education Researcher found that scaffolded instructional support significantly promotes student problem-solving ability.

The takeaway: support has to be deliberate, not simply available.

Scaffolding through quiz design:

How you build the quiz can support struggling students before they even get stuck.

- Break complex questions into steps — Instead of one multi-part question, sequence simpler questions that build toward the harder concept

- Use easier questions early — Low-difficulty items at the start build confidence and activate prior knowledge

- Include explanations with feedback — When students see why they got something wrong, not just that they did, they’re more likely to self-correct

Scaffolding through follow-up:

After the quiz, use the data to target support specifically.

- Group students by result — Students who struggled with the same concept can work together or receive the same targeted reteach

- Assign differentiated follow-up — Students who passed don’t need the same next step as students who didn’t. AI tools that let you create multiple versions of a quiz or assign different content based on performance make this easier to execute.

- Revisit, don’t just re-explain — A struggling student who hears the same explanation again often stays confused. Change the approach: different question type, different example, different framing.
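
Grouping students by result can be sketched in a few lines if each question is tagged with the concept it tests. The `group_by_missed_concept` helper, the concept labels, and the student names below are hypothetical, and the sketch assumes you have exported per-question correctness for each student.

```python
from collections import defaultdict


def group_by_missed_concept(results, question_concepts):
    """results: {student: [True/False per question]};
    question_concepts: one concept label per question, in the same order."""
    groups = defaultdict(list)
    for student, answers in results.items():
        # A concept counts as missed if the student got any of its questions wrong.
        missed = {c for ok, c in zip(answers, question_concepts) if not ok}
        for concept in sorted(missed):
            groups[concept].append(student)
    return dict(groups)


concepts = ["light reactions", "Calvin cycle", "Calvin cycle"]
results = {
    "Ana": [True, False, False],
    "Ben": [True, True, False],
    "Cy": [True, True, True],
}
print(group_by_missed_concept(results, concepts))
```

Students who missed nothing do not appear in any group, which makes it easy to see who is ready for a different next step.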

Don’t wait for the end-of-unit test to find out who’s behind.

Frequent, low-stakes AI quizzes throughout a unit catch gaps early when there’s still time to address them.

One of the many good follow-ups for struggling students is an open-ended writing assignment. Check out how to create an auto-graded essay with AI.

Best Practices for AI-Generated Quizzes

Core practices tell you what to do. Best practices tell you how to do it well.

These aren’t rules. They’re habits that separate teachers who get useful output from AI from those who get generic questions they can’t use.

Start Small

Don’t build your entire assessment strategy around AI on day one.

AI quiz tools have a learning curve that is pedagogical rather than technical. You’ll get better at prompting, better at spotting weak questions, and better at knowing which source materials work well.

That judgment builds with use, not with reading about it.

A good first quiz to try: a low-stakes, 5-question check-in at the end of a lesson you’ve taught before. You already know the material well, which makes it easier to evaluate whether the AI got it right.

Start where the stakes are low. Scale once you trust your own process.

Review Before Delivering

AI generates a draft. You approve what students see.

Every question in an AI-generated quiz should pass a quick check before you assign it:

- Does this test what I actually taught?

- Are the answer choices clear and distinct?

- Is the difficulty appropriate for this moment in the unit?

- Would I have written this question myself?

If the answer to any of those is no, edit it or cut it.

The risk of skipping review isn’t just bad questions. It’s students being assessed on content that was never taught, losing trust in the quiz, and generating data you can’t act on.

Use Prompts Strategically

The output quality of AI quiz tools depends heavily on what you put in.

Vague input produces vague questions. “Create a quiz on World War I” gives you something generic.

“Create 5 multiple-choice questions on the causes of World War I for 10th grade, focusing on the alliance system and the assassination of Archduke Franz Ferdinand, at an application level” gives you something usable.

What to specify when prompting:

- Topic and scope — Be narrow. One concept, not a whole unit.

- Grade level and difficulty — Don’t assume the AI knows your students.

- Question type — Multiple choice, short answer, true/false. Tell it.

- Cognitive level — Recall, application, analysis. This changes the kind of question generated.

- Number of questions — Fewer, focused questions beat long generic sets.

You don’t need to include all of these every time.

But the more specific you are, the less editing you’ll need to do after.
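
If you reuse the same prompt structure often, the elements above can be assembled with a small template function. This is a sketch, assuming you paste the result into a chatbot or a tool's instruction box yourself; `build_quiz_prompt` and its parameter names are made up for this example and do not belong to any AI tool's API.

```python
def build_quiz_prompt(topic, num_questions=5, question_type=None,
                      grade_level=None, focus=None, cognitive_level=None):
    """Assemble a quiz prompt, including only the details that were supplied."""
    kind = f"{question_type} questions" if question_type else "questions"
    prompt = f"Create {num_questions} {kind} on {topic}"
    if grade_level:
        prompt += f" for {grade_level}"
    if focus:
        prompt += f", focusing on {focus}"
    if cognitive_level:
        prompt += f", at the {cognitive_level} level"
    return prompt + "."


print(build_quiz_prompt(
    "the causes of World War I",
    question_type="multiple-choice",
    grade_level="10th grade",
    focus="the alliance system and the assassination of Archduke Franz Ferdinand",
    cognitive_level="application",
))
```

Every optional argument left out simply drops from the prompt, so the same function covers both a quick check and a tightly scoped set.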

Better AI output starts with better prompt writing. While you’re at it, take a quick look at how to avoid AI hallucinations.

Balance AI with Teacher Control

AI handles the mechanical parts of quiz creation. You handle the judgment calls.

AI doesn’t know your students, your classroom culture, what you emphasized in a past lesson, or which misconceptions have been showing up all semester.

You do.

What AI should own:

- Generating a first draft fast

- Pulling questions from long source documents you don’t have time to parse

- Formatting, delivery, grading, and basic analytics

What you should own:

- Which questions stay and which get cut

- Whether the difficulty matches where students are right now

- How results connect to what you teach next

- Whether this quiz is appropriate for these students at this moment

The goal is to use AI for the parts that don’t require your expertise so you can focus on the parts that do.

What to Consider When Using AI for Quizzes

AI quiz tools make creation faster. But faster comes with responsibility.

Before you use any AI tool with your students, there are two things you should understand clearly: what happens to your students’ data, and where your professional judgment still needs to show up.

Privacy and Student Data

When you use an AI tool in your classroom, data is being collected.

The question is what kind, and what happens to it.

A 2024 policy brief from the Future of Privacy Forum on vetting generative AI tools for school use identifies the key distinction: whether a tool requires student personally identifiable information (PII) as input, or whether output from the tool becomes part of a student’s record.

When either is true, federal laws like FERPA and COPPA apply, and schools are responsible for ensuring vendor contracts reflect those obligations.

In practice, this means a quiz tool where students log in with school credentials, submit responses tied to their name, and receive grades is processing student PII.

That implicates different legal requirements than a tool you use privately to generate questions that you then deliver manually.

What to check before using any AI quiz tool with students:

- Does the tool collect student PII? — Names, emails, school IDs, and response data tied to identifiable students are PII.

- Does the tool use student data to train or improve their AI? — This is a red flag under most school privacy policies. Look for explicit clauses in the terms of service.

- Is there a data processing agreement (DPA) with your school or district? — Many districts require one before any edtech tool can be used with students.

- How long does the tool retain student data, and can it be deleted? — FERPA requires schools to have control over data deletion.

- Are students under 13? — COPPA imposes additional consent and data minimization requirements for tools used with children under 13.

A simpler rule of thumb: If you’re unsure about a tool’s data practices, don’t enter student names, identifiers, or real response data until you’ve verified compliance.

Many quiz generation tasks can be done with anonymized inputs.
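
As one way to work with anonymized inputs, you can swap student names for neutral labels before pasting results into an unvetted tool and keep the lookup table on your own device. The sketch below assumes exported rows with hypothetical `name` and `score` fields. Replacing names is only a first step: it may not meet your district's definition of de-identified data, so check local policy.

```python
def anonymize(rows):
    """Swap names for labels; return (anonymized rows, local name-to-label lookup)."""
    lookup = {}
    cleaned = []
    for row in rows:
        # Reuse the label if this student already appeared; otherwise assign the next one.
        label = lookup.setdefault(row["name"], f"Student {len(lookup) + 1}")
        cleaned.append({"student": label, "score": row["score"]})
    return cleaned, lookup


rows = [
    {"name": "Ana Cruz", "score": 4},
    {"name": "Ben Lee", "score": 2},
    {"name": "Ana Cruz", "score": 5},
]
cleaned, lookup = anonymize(rows)
print(cleaned)  # safe to share; keep `lookup` private
```

Free-text answers can still contain identifying details, so review those by hand before sharing them anywhere.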

Teacher Oversight

Using an AI tool doesn’t transfer your professional responsibility. It extends it.

Research published in Computers and Education: Artificial Intelligence found that large language models align with expert evaluation on explicit, surface-level features of educational content, but show lower reliability when assessing high-inference pedagogy and instructional coherence.

In plain terms: AI can produce a structurally correct quiz, yes. But it can’t judge whether that quiz is pedagogically sound for your students.

That gap is where your intervention matters most.

What AI can’t assess on its own:

- Whether the questions reflect how you actually taught the concept

- Whether the difficulty is right for where your students are in their learning

- Whether the framing of a question is culturally appropriate or unintentionally confusing

- Whether a “correct” answer in the source material is actually contested or context-dependent

None of this is a reason to avoid AI quiz tools. It’s a reason to stay in the loop.

Oversight is the point.

The teacher who reviews AI-generated questions before assigning them isn’t doing extra work. They’re doing the actual pedagogical work that AI can’t do.

AI Quiz Tools to Get Started With

Knowing what AI can do for quiz creation is useful. Knowing which tool to start with is where most teachers get stuck.

There are dozens of AI quiz tools available. They don’t all do the same thing. Choosing the wrong one for your workflow means extra work, not less.

What to Look For

The right tool depends on how you teach, what you need the quiz to do, and what systems you’re already using.

These are the criteria that actually matter:

| Criteria | Why It Matters | What to Check |
|---|---|---|
| Input sources | You should be able to build from what you already have, not retype content | Does it accept documents, URLs, videos, and typed topics? |
| Question types | Different assessment goals need different formats | Does it support multiple choice, short answer, true/false, and fill-in-the-blank? |
| Editing flexibility | AI generates a draft; you need to refine it | Can you edit questions, rewrite answer choices, and adjust difficulty after generation? |
| Delivery method | Friction in access hurts completion | Does it support shareable links, QR codes, or LMS integration? |
| Grading and analytics | Manual grading defeats the time-saving purpose | Does it auto-grade and show per-question and per-student data? |
| Student experience | Students should be able to take the quiz without creating accounts | Is it accessible without student login? Is it mobile-friendly? |
| Privacy compliance | Student data has legal protections | Does the tool have a DPA available? Does it use student data for model training? |
| Free tier limits | Most tools cap free usage. Know what you get before you commit. | How many quizzes or generations does the free plan include? |

No single tool will max out every row. The goal is finding the best fit for what you’ll actually use it for.

If you need auto-grading and analytics, a tool that only generates questions won’t cut it. If students need to take quizzes asynchronously on their phones, a desktop-only platform is a problem before you even start.
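
One way to turn these criteria into a comparison is a weighted score with hard requirements. The sketch below is only an illustration: the tool names, the 0 to 2 ratings, and the weights are invented, and `rank_tools` is a hypothetical helper. The ratings should come from your own trial of each tool.

```python
def rank_tools(tools, weights, must_have=()):
    """tools: {name: {criterion: rating 0-2}}; weights: {criterion: importance}."""
    ranked = []
    for name, ratings in tools.items():
        # A zero on any must-have criterion disqualifies the tool outright.
        if any(ratings.get(c, 0) == 0 for c in must_have):
            continue
        score = sum(weights[c] * ratings.get(c, 0) for c in weights)
        ranked.append((name, score))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Made-up ratings: 0 = missing, 1 = partial, 2 = strong.
tools = {
    "Tool A": {"input_sources": 2, "grading_analytics": 0, "no_login": 2},
    "Tool B": {"input_sources": 1, "grading_analytics": 2, "no_login": 1},
    "Tool C": {"input_sources": 2, "grading_analytics": 1, "no_login": 2},
}
weights = {"input_sources": 2, "grading_analytics": 3, "no_login": 2}
print(rank_tools(tools, weights, must_have=("grading_analytics",)))
```

Treating a criterion as a must-have mirrors the point above: a tool that lacks the one thing you need drops out no matter how well it scores elsewhere.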

For a deeper breakdown of how these criteria separate strong tools from weak ones, see what makes a good AI quiz generator.

Teacher Top Picks

When teachers talk about which AI quiz tools they actually keep using, a few names come up consistently.

These tools show up across teacher communities, EdTech roundups, and educator reviews for good reason: they’re practical, accessible, and built with how teachers actually work in mind.

Here are the ones worth knowing:

- Edcafe AI — A dedicated AI teaching platform built for the full quiz workflow. Generates quizzes from topics, documents, URLs, and YouTube videos. Supports multiple question types, quiz modes, direct assignment, auto-grading, and real-time data and analytics. Free plan available; Pro from ~$8/month.

- Conker — Standards-aligned quiz generation with mixed question types and no-login student access. Simple interface, good for teachers who want fast, clean output without a lot of setup. Free plan (5 quizzes); paid plans from ~$4/month.

- QuestionWell — Standards-aligned multiple-choice generator with LMS export. Solid for teachers who generate questions on their side and push them into Google Classroom or another platform they already use. Free plan available; Premium ~$7/month.

- Quizgecko — Generates quizzes from PDFs, slides, YouTube links, and handwritten notes in about 30 seconds. Comes with 15+ LMS integrations and a strong privacy posture. Free plan available; paid plans from ~$8/month.

- Formative — Built for real-time assessment rather than just quiz generation. Teachers can see student responses as they happen, give live feedback, and adjust instruction mid-lesson. Free plan available; school and district plans for advanced analytics.

For a fuller comparison with pricing, limitations, and use-case breakdowns, see the best free AI quiz makers for teachers plus a separate list of assessment tools.

Before You Go

I leave you with this: any AI quiz is only as good as the teacher behind it.

AI can generate a quiz in seconds.

What it can’t do is decide:

- whether those questions reflect what you actually taught,

- whether the difficulty is right for where your students are,

- or whether the results point to something you need to address before moving on.

That part is still yours.

This guide exists because speed isn’t the hard part.

The hard part is knowing what to do with the quiz before you assign it, while students are taking it, and after results come in. That’s where most teachers either get real value from AI or walk away frustrated.

At the end of the day, the teachers who get the most out of AI quiz tools are the ones who use them deliberately.

Start with one quiz. Pick content you already know well. Review before you assign. Look at what the data tells you. Adjust.

And that’s basically it. That’s the whole practice.

FAQs

What’s the difference between using ChatGPT and a dedicated AI quiz tool?

ChatGPT generates quiz questions when you prompt it, but it stops there — there’s no delivery system, no student interface, no auto-grading, and no analytics. You’d need to manually copy questions into another platform. Dedicated AI quiz tools generate questions and handle the entire workflow: sharing, student access, grading, and data. For one-off drafts, ChatGPT works. For actual classroom use, a dedicated tool is more practical.

How accurate are AI-generated quiz questions?

AI-generated questions are often structurally sound but not always pedagogically accurate. Common problems include distractor options that are too obvious, questions testing content that wasn’t taught, and factual errors in niche subject areas. Teacher review before assigning is essential.

Are AI quiz tools safe for student data?

It depends on the tool. When students submit responses tied to their names or school accounts, that data can qualify as personally identifiable information (PII) protected under laws such as FERPA and COPPA. Before using any AI quiz tool with students, check whether the vendor has a data processing agreement (DPA) available, whether student data is used to train their AI models, and how long data is retained. Tools like Quizgecko and Edcafe AI explicitly state that student data is not used for AI training.

Do I need technical skills to use AI quiz tools?

No. Most AI quiz tools are designed for teachers without technical backgrounds. You type a topic or upload a file, select your question preferences, and the tool generates a draft. The most advanced skill required is writing a clear prompt — which improves with practice but requires no coding or technical knowledge to start.

How long does it take to create a quiz with AI?

Generating a first draft takes between 5 and 30 seconds with most dedicated AI quiz tools. A full quiz creation workflow — from uploading source material to assigning to students — typically takes 5 to 15 minutes, compared to 30 to 60 minutes for writing a quiz manually. The time savings come primarily from generation; review and editing still require teacher judgment.
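To see what those ranges add up to over a term, here is a rough back-of-the-envelope sketch. It uses only the minute ranges quoted above; the `minutes_saved` function and the figure of 10 quizzes are hypothetical, and the result is an estimate, not a measurement.

```python
# Minute ranges taken from the answer above: (fastest, slowest).
AI_MINUTES = (5, 15)       # full AI-assisted workflow, including review
MANUAL_MINUTES = (30, 60)  # writing a quiz by hand


def minutes_saved(quizzes, ai=AI_MINUTES, manual=MANUAL_MINUTES):
    """Return (low, high) estimates of minutes saved across `quizzes` quizzes.

    Low pairs the fastest manual time with the slowest AI-assisted time;
    high pairs the slowest manual time with the fastest AI-assisted time.
    """
    low = (manual[0] - ai[1]) * quizzes
    high = (manual[1] - ai[0]) * quizzes
    return low, high


# Assumed workload: 10 quizzes in a term.
print(minutes_saved(10))  # (150, 550)
```

Under these assumptions, 10 quizzes would save somewhere between 2.5 and roughly 9 hours, and where you land in that range depends mostly on how much review and editing each draft needs.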

Can AI generate different types of quiz questions?

Yes. Most dedicated AI quiz generators support multiple choice, true/false, short answer, and fill-in-the-blank questions. Some also generate matching questions, open-ended responses, and multi-select options. You typically specify the question type before generating, or mix types within a single quiz. The quality of each type varies — multiple choice and true/false tend to be most reliable; short answer and open-ended questions may require more editing.