Student feedback works when it’s specific, timely, and pushes students to act on something.

But giving feedback at that level of quality takes hours you simply don’t have.

A recent meta-analysis of 40 studies involving 5,849 participants, published in the Journal of Educational Computing Research (JECR), found that AI-supported personalized feedback has a significant positive effect on learning outcomes, and an even stronger one on learning motivation.

In simpler words: students who receive personalized feedback engage more and improve faster.

But the obvious challenge is scale.

Writing meaningful feedback for every student, on every assignment, is logistically unsustainable.

This is where you can use AI to your advantage.

So, yes. It is possible to personalize student feedback with AI. But you need the right approach.

This guide shows you how to use AI to personalize student feedback at scale while keeping it specific and authentically yours.

Where Personalization and AI Meet

Personalized student feedback is not breaking news.

Teachers have always known that “Good job” doesn’t help a student improve, but “Your thesis is clear, now add evidence from the text to support it” does.

The difference here is specificity.

Research published in Frontiers in Education shows that high-quality feedback with self-regulation information has effect sizes around 0.99.

That makes it one of the most powerful levers for student learning.

But that kind of feedback requires knowing what each student wrote, where they struggled, and what they need to do next.

AI changes what’s possible at scale.

It can read 30 student responses in seconds. It can identify patterns. It can generate feedback tailored to what each student actually wrote, not generic praise or vague suggestions.

But AI doesn’t replace your judgment. It speeds up the part that takes time (reading, drafting) so you can focus on the part that matters (making it meaningful).

What AI can do

AI reads student work and generates feedback based on what it sees:

- Identify strengths and gaps. AI can spot what a student got right, what they missed, and where their reasoning breaks down.

- Generate specific, actionable suggestions. Instead of “Try harder,” AI can say “Your introduction is strong, but your second paragraph needs evidence to support the claim.”

- Adapt tone and complexity. AI can adjust feedback language to match student reading level or confidence.

- Scale to every student. Whether you have 5 students or 150, AI generates feedback for each one in the same amount of time.

What you, as a teacher, add

AI gives you the draft. You add the context that makes it yours:

- Your teaching language. AI might say “Consider adding more detail.” You say “Remember how we talked about showing vs. telling? Try that here.”

- Connection to the lesson. AI doesn’t know you spent 20 minutes on thesis statements last week. You do. Reference that in your feedback.

- Encouragement that’s real. AI can generate praise, but it doesn’t know this is the first time a student turned in work on time, or that they’ve been struggling all semester. You do.

- Next steps that fit the student. AI suggests what to improve. You decide whether this student needs a challenge, more scaffolding, or a confidence boost.

The best feedback happens when AI handles the reading and drafting, and you handle the personalization that requires knowing your students.

Choosing the Right AI Tool for Personalized Student Feedback

You already know it: not all AI feedback tools are built the same.

Some generate generic comments that could apply to any student. Others let you guide the feedback so it reflects your teaching and your criteria.

The main consideration here should be control.

A recent study with 21 higher education teachers found that educators frequently accepted AI feedback suggestions but extensively revised outputs, often moderating praise, adjusting tone, and adding relationship-building statements.

Teachers valued AI for identifying missing feedback components but emphasized the need for human editing to make feedback meaningful.

That’s the pattern: AI speeds up the process, but teachers shape the result.

When choosing an AI tool for student feedback, look for these features:

- Accepts your grading criteria or rubric. The AI should generate feedback based on what you’re actually assessing, not generic writing quality.

- Works with different assignment types. Whether you assign essays, short answers, or problem sets, the tool should handle them.

- Lets you edit before students see it. You should review and adjust feedback before it goes out, not after students have already read it.

- Supports multiple input sources. Students submit work in different formats: text, uploaded files, Google Docs. The tool should handle all of them.

Different tools serve different needs:

- For essays and long-form writing: Tools like Writable, EssayGrader, or CoGrader focus on structure, argument, and writing mechanics. See our roundup of AI essay grader tools for detailed comparisons.

- For quiz performance: Tools that auto-generate feedback based on correct answers and common misconceptions work well for formative checks.

- For real-time, on-the-spot feedback: AI chatbots that students can question while they work provide immediate support without waiting for teacher review.

- For general assignments: Tools that let you define custom grading instructions and upload rubrics give you more flexibility across assignment types.

The key is finding a tool that lets you set expectations up front, so the AI generates feedback aligned with your teaching.

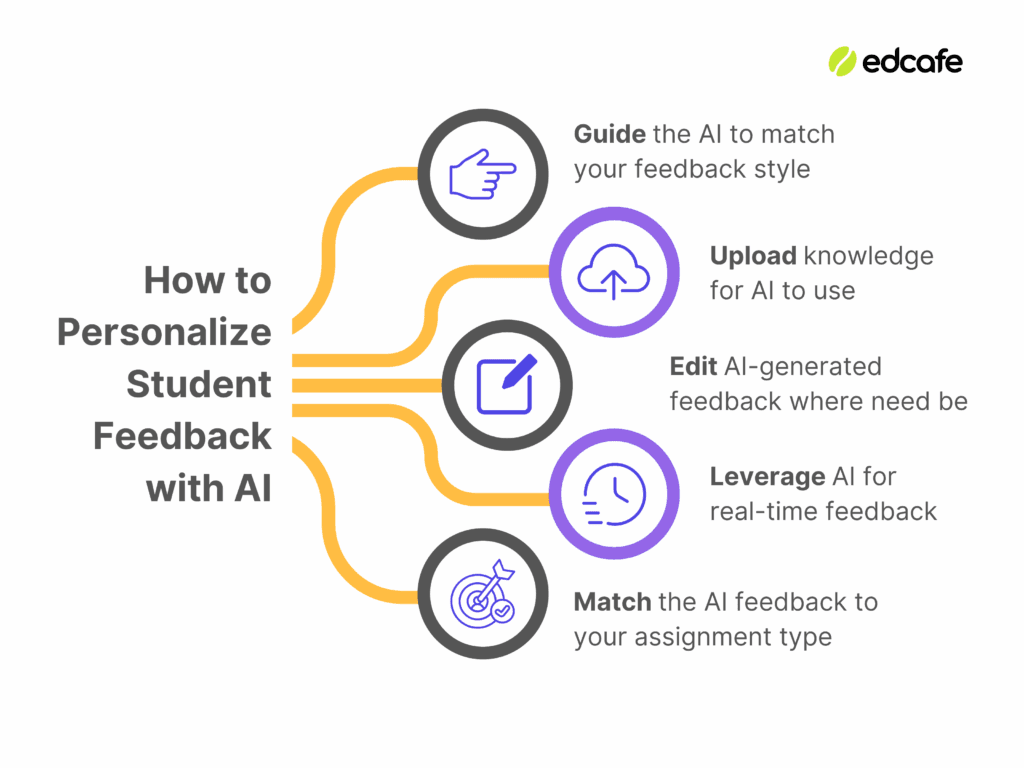

1. Guide the AI to Match Your Feedback Style

Language models like ChatGPT can draft feedback in seconds.

But here’s the problem: without guidance, that feedback sounds generic. It might be technically correct, but it doesn’t sound like you.

Student feedback must sound like you because when it does, students recognize it.

What “feedback style” actually means

Your feedback style includes:

- Tone. Are you encouraging and supportive? Direct and matter-of-fact? Conversational? Formal?

- Structure. Do you organize feedback by strengths and areas for improvement? By rubric categories? By priority?

- Focus. Do you emphasize content and ideas first, or mechanics and grammar? Do you highlight what’s working before what needs fixing?

- Language. Do you use phrases like “I noticed…” or “Try this next time”? Do you reference class discussions or specific strategies you’ve taught?

AI doesn’t know any of this unless you tell it.

How to set your feedback style in AI tools

Most AI student feedback tools have a field where you can provide instructions.

This is where you define your style.

For example, if you’re using a tool to grade essays, you might write:

- “Divide feedback into two sections: Strengths and Next Steps. Start with what the student did well, then suggest one or two specific improvements.”

- “Use an encouraging tone. Focus on content and argument first, then address grammar only if it interferes with clarity.”

- “Reference the thesis statement strategies we practiced in class when giving feedback on introductions.”

The more specific you are, the more the AI-generated feedback will sound like something you’d actually say.
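If you reuse the same style across assignments, it can help to keep the four elements (tone, structure, focus, language) in one place and assemble the instructions from them. The sketch below is only an illustration: `FeedbackStyle` and `build_grading_instructions` are hypothetical names, not part of any tool, and the output is plain text you would paste into whatever instructions field your tool provides.

```python
from dataclasses import dataclass, field


@dataclass
class FeedbackStyle:
    """Hypothetical container for the four style elements described above."""
    tone: str
    structure: str
    focus: str
    language_notes: list[str] = field(default_factory=list)


def build_grading_instructions(style: FeedbackStyle) -> str:
    """Join the style elements into one block of plain-text instructions."""
    lines = [
        f"Tone: {style.tone}",
        f"Structure: {style.structure}",
        f"Focus: {style.focus}",
    ]
    # Each language note becomes its own instruction line.
    lines.extend(f"Language: {note}" for note in style.language_notes)
    return "\n".join(lines)


style = FeedbackStyle(
    tone="Use an encouraging tone.",
    structure="Divide feedback into two sections: Strengths and Next Steps.",
    focus="Focus on content and argument first, then grammar only if it interferes with clarity.",
    language_notes=["Reference the thesis statement strategies we practiced in class."],
)
print(build_grading_instructions(style))
```

Keeping tone and structure fixed while swapping the focus line per assignment is one way to stay consistent without pasting identical instructions everywhere.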

A bonus of tools like Edcafe AI is that you don’t have to build these prompts from scratch.

Its Assignment Grader feature, for example, is pre-built to scan student work and generate classroom-ready feedback with default sections such as:

- Summary – A two-sentence overview of the submission quality

- Strengths – Three specific aspects done well

- Areas for Improvement – Three specific areas to work on

- Next Steps – Actionable suggestions to help the student improve

To make the feedback sound more like you, use the dedicated Grading Instructions field to specify your tone, structure, and focus. Your instructions build on the default sections rather than replacing them.

2. Upload Knowledge for AI to Use

AI can only personalize feedback based on what it knows about your grading criteria.

Without a rubric or reference materials, the AI defaults to generic writing quality.

It can’t assess whether students met your specific assignment requirements because it doesn’t know what those requirements are.

The obvious fix here is to upload the files that define what you’re actually assessing.

What to upload

The most important file you can give the AI is your rubric.

A rubric tells the AI exactly what you’re looking for.

When the AI has your rubric, it evaluates student work against those specific categories instead of guessing what matters.

If you don’t have a formal rubric, you can upload:

- Assignment instructions or prompts – The document you gave students explaining the task and expectations

- Reference materials – Source texts, articles, or readings students were supposed to use in their work

- Example work or anchor papers – Models of what strong work looks like for this assignment

These files give the AI context about what students were asked to do and what success looks like.

How to upload rubrics and reference materials

Most AI grading tools let you upload documents directly.

For example, Edcafe AI’s Assignment Grader includes a dedicated rubric upload field where you can drop in a PDF or any other standard document format.

The AI then reads the rubric and uses it to evaluate student submissions against your specific criteria.

Some tools also let you import materials from your LMS or Google Drive, making it easier to pull in assignment prompts or reference documents you’ve already created.

3. Edit AI-Generated Feedback Where Needed

AI-generated feedback is a starting point, but never the final product.

Even when you’ve set your feedback style and uploaded your rubric, the AI won’t always get it exactly right.

That’s why the best AI feedback tools let you review and edit the generated feedback.

Why editing matters

Recent research published on arXiv shows that AI-mediated feedback, where teachers review and edit AI suggestions, produces significantly higher-quality student revisions than either pure AI feedback or teacher feedback alone.

The same research found that teachers accept AI-generated feedback without modification about 80% of the time.

That means most AI feedback is already close, but the 20% you do edit makes the difference between generic and personalized.

The goal is to make small adjustments that turn AI-generated feedback into something that sounds like you and fits the student.

Common edits include:

- Adding student-specific context. “I can see you applied the strategy we practiced last week. Nice work.”

- Adjusting tone. Softening harsh phrasing or adding encouragement where the AI was too blunt.

- Clarifying vague suggestions. Replacing “Consider revising this section” with “Try adding a transition sentence here to connect your second and third paragraphs.”

- Fixing errors. Correcting misinterpretations or removing feedback that doesn’t apply.

These edits don’t take long, but they make the feedback feel personal instead of automated.

How to integrate your own feedback easily

A good AI feedback tool lets you integrate your own feedback easily, whether that means editing what the AI generated or adding your own comments alongside it.

For example, Edcafe AI’s Assignment Grader is designed so students get feedback on the spot when they submit their work.

But you can track all submissions in the dashboard, see the feedback each student received, and take over the AI-generated feedback at any time.

When you edit it, your changes appear on the student’s side in real time, so they see your updated version immediately.

Another option is doing the grading yourself by uploading student submissions in bulk. You can upload:

- Multiple files at once – Drop in a batch of student submissions (PDFs, Word docs, etc.)

- Scanned images – Upload photos or scans of handwritten work

- Audio files – Upload student audio recordings with automatic transcription

The AI generates student feedback for all submissions, and you can review and edit each one from the submissions dashboard.

When working with quizzes, Edcafe AI also generates contextual explanations for each question, walking students through the correct answer.

Before assigning the quiz to students, you can edit these explanations to ensure that once students finish their quizzes, they get the explanations you want them to see.

4. Leverage AI for Real-Time Feedback

Not all feedback needs to wait until you’ve graded the assignment.

Real-time feedback keeps students engaged and helps them learn while the task is still fresh in their mind.

AI tutors have become popular partly because they provide instant, personalized support when students need it most, offering real-time guidance even beyond classroom hours.

When real-time feedback works best

Real-time AI feedback is most effective for:

- Formative checks. Short-answer quiz questions, exit tickets, or comprehension checks where students need to know if they’re on the right track.

- Practice problems. Math, coding, or problem-solving tasks where students benefit from immediate correction or hints.

- On-the-spot questions. When students are stuck and need help right away, not hours later when they’ve already moved on.

Real-time feedback supplements your grading by giving students immediate guidance so they can keep working instead of waiting for you to review their submission.

How to set up real-time feedback

The most common way to deliver real-time feedback is to build student-facing AI chatbots.

These are different from chatbots you use as a teacher. Student-facing chatbots are ones you build and customize specifically for your students to interact with, designed to support their learning, not yours.

When setting up a student-facing chatbot for real-time feedback, you control:

- Interaction guidelines – Set how the chatbot responds. Should it ask guiding questions instead of giving direct answers? Should it provide hints or step-by-step explanations? You define the behavior.

- Knowledge base – Upload your lesson materials, class notes, or reference documents so the chatbot answers based on what you’ve taught, not generic internet information.

- Student capabilities – Decide what students can do in the chatbot. Can they upload files for help? Draw on a whiteboard to explain their thinking? Talk to it using voice input?

For example, Edcafe AI’s Chatbot lets you build a student-facing AI tutor from scratch.

You set interaction guidelines (e.g., “Ask guiding questions instead of giving direct answers”), upload a knowledge base (like lesson notes or readings), and configure what students can do: whether that’s uploading files, using voice input, or getting instant feedback on their questions.

The chatbot becomes a 24/7 learning assistant that aligns with your teaching, not a generic help tool.

5. Match the AI Feedback to Your Assignment Type

It’s easy to conclude that personalization is all student feedback needs.

But feedback also has to match the type of assignment.

The best AI feedback tools let you adjust how feedback is delivered based on what you’re assigning.

Different assignment types, different feedback approaches

Here’s how feedback delivery should change depending on the assignment:

| Assignment Type | When Feedback Is Delivered | What Feedback Should Include |
|---|---|---|
| Formative quizzes & practice | Instantly after each question | Explanations walking through the correct answer, images or voice notes for clarity |
| Summative assessments | After completing the entire assessment | Explanations for all questions, but only shown after submission to prevent answer adjustment |
| Essays & open-ended work | After teacher review and editing | Comprehensive, structured feedback covering strengths, areas for improvement, and next steps |
| Real-time support | Immediately when students ask | Conversational guidance with hints and guiding questions, not direct answers |

How AI adapts feedback to assignment types

Edcafe AI gives you control over how feedback is delivered depending on what you’re assigning:

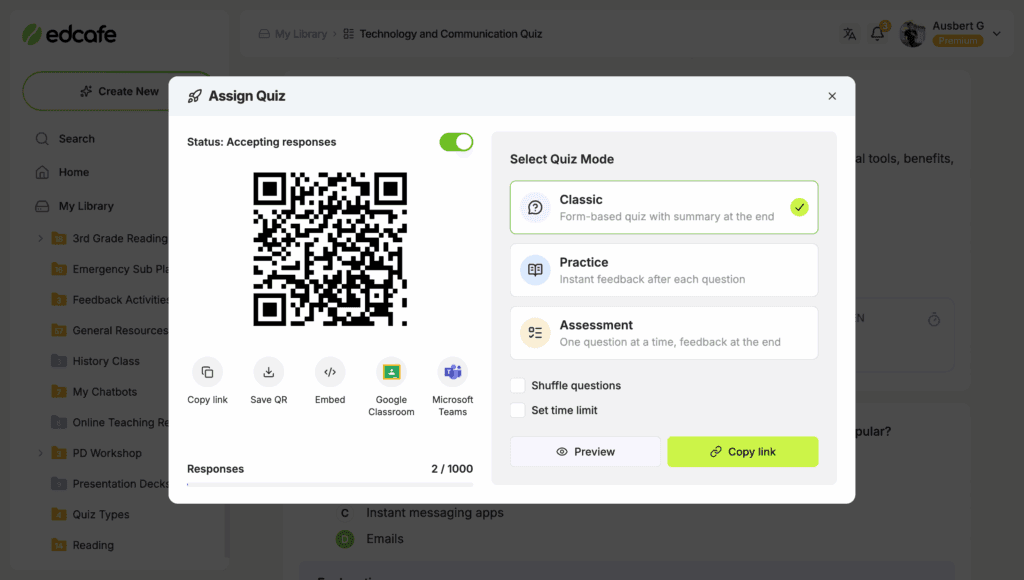

For quizzes, you can choose from three modes:

- Practice Mode – Students get instant feedback after each question, perfect for formative checks and learning as they go

- Assessment Mode – Students complete the full quiz first, one question at a time, then receive feedback all at once, ideal for summative assessments

- Classic Mode – Students see the entire quiz at once and get feedback only after submitting all responses

For essays and assignments, the Assignment Grader generates structured feedback (summary, strengths, areas for improvement, next steps) as students submit written work, evaluating it against the grading instructions and rubric you’ve set.

For real-time support, the Chatbot provides instant, conversational feedback based on the knowledge base and interaction guidelines you’ve built. Students get help when they need it, grounded in your teaching.

Common Mistakes to Avoid

Here are the tactical mistakes teachers make when personalizing feedback with AI, and how to avoid them:

- Copying and pasting the same grading instructions for every assignment. Your feedback style might stay consistent, but the focus changes. A persuasive essay needs different emphasis than a research paper. Update your instructions to match what you’re actually assessing.

- Not telling students how AI is being used. Students see feedback and don’t know if it came from you or AI. Be transparent: let them know AI generated the first draft and that you reviewed and personalized it. It builds trust and sets expectations.

- Editing only the first few submissions, then auto-accepting the rest. You review the first 3-4 pieces of feedback carefully, see they look good, then stop checking. But AI might handle different student work differently. Spot-check throughout, not just at the start.

- Forgetting to reference what you’ve taught. AI doesn’t know you modeled a specific revision strategy. When you edit feedback, connect it to your classroom: “Remember the strategy we practiced on Tuesday? Try that here.”

- Assuming AI will catch plagiarism or AI-generated work. AI feedback tools aren’t detectors. They evaluate based on your rubric, not whether the work is original. If academic integrity is a concern, address it separately, and don’t rely on AI feedback to flag it.

- Skipping analytics. You generate feedback for 30 students but never look at the patterns. Analytics show you which students need follow-up or which concepts the whole class missed. Use relevant data to personalize your next steps, not just to grade faster.
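If your tool doesn’t surface these patterns for you, a small tally can do it. The sketch below assumes you have noted, for each student, which rubric criteria the feedback flagged for improvement. The data, the function names, and the thresholds are all hypothetical and only meant to illustrate the idea.

```python
from collections import Counter

# Hypothetical export: student name -> rubric criteria flagged for improvement.
flagged = {
    "Student A": ["evidence", "transitions"],
    "Student B": ["evidence"],
    "Student C": ["thesis", "evidence"],
    "Student D": [],
}


def class_wide_gaps(flagged_by_student, min_share=0.5):
    """Return criteria flagged for at least `min_share` of students."""
    counts = Counter(
        criterion
        for criteria in flagged_by_student.values()
        for criterion in set(criteria)  # count each student once per criterion
    )
    total = len(flagged_by_student)
    return sorted(c for c, n in counts.items() if total and n / total >= min_share)


def students_needing_follow_up(flagged_by_student, min_flags=2):
    """Return students with at least `min_flags` flagged criteria."""
    return sorted(
        name
        for name, criteria in flagged_by_student.items()
        if len(set(criteria)) >= min_flags
    )


print(class_wide_gaps(flagged))             # ['evidence']
print(students_needing_follow_up(flagged))  # ['Student A', 'Student C']
```

A criterion that shows up for half the class is probably worth reteaching to everyone, while a student with several flags may need an individual conversation.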

Before You Go

The best feedback you’ve ever given a student probably wasn’t the longest or the most detailed.

It was the one that showed you were paying attention. The comment that referenced something they said in class last week. The suggestion that built on their strengths instead of just listing what was wrong. The encouragement that landed because it was specific to them.

That’s what students remember.

Because at the end of the day, what students need is feedback that feels like it came from someone who knows them and wants them to succeed.

AI can help you deliver that. But only if you don’t let it replace you.

Start with tools you’re already familiar with. Language models like ChatGPT can help you draft feedback and see what’s possible.

If you want something built specifically for teachers, Edcafe AI is a good one to try.

It’s designed for the full teaching cycle, so what you learn about personalizing feedback here will likely carry over to how you plan lessons, create assessments, and support students in real time.

FAQs

Is it hypocritical to use AI for grading if I don’t let students use it?

No. You’re using AI as a tool to support your professional work. Students are using AI to complete learning tasks meant to build their skills. The difference is purpose: you’re using AI to enhance your teaching, not to bypass the learning process. Be transparent with students about how you use AI, and explain that your role (evaluating and personalizing feedback) is different from theirs (demonstrating understanding).

How do I know if AI feedback matches my rubric and tone?

Review the first 5-10 pieces of AI-generated feedback carefully. Check if it addresses your rubric criteria, uses language you’d actually say, and avoids generic praise. If it’s off, adjust your grading instructions or rubric clarity. Spot-check throughout, and don’t just review the first few and assume the rest are fine.
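One way to spread spot-checks across a batch, rather than relying on memory, is to pick the drafts in advance. This is a minimal sketch under stated assumptions: `spot_check_indices` is a hypothetical helper, and the defaults (read the first five, then one random draft from every later block of five) are illustrative rather than a recommendation from any study.

```python
import random


def spot_check_indices(n_submissions, first_n=5, every_k=5, seed=None):
    """Pick which feedback drafts to read closely.

    Always includes the first `first_n`, then one randomly chosen draft from
    each later block of `every_k`, so checks are spread across the batch.
    """
    rng = random.Random(seed)
    picks = list(range(min(first_n, n_submissions)))
    for start in range(first_n, n_submissions, every_k):
        block = range(start, min(start + every_k, n_submissions))
        picks.append(rng.choice(block))
    return picks


# Positions (0-based) of the drafts to review in a batch of 30.
print(spot_check_indices(30, seed=1))
```

For a class of 30 this selects 10 drafts: the first five plus one from each remaining group of five.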

Does using AI for feedback actually save time?

Yes, but not always immediately. The time savings come from AI handling the reading and drafting. You still spend time reviewing and editing, but you’re starting from 80% done instead of 0%.

What’s the best AI tool for personalizing student feedback?

It depends on what you’re grading. For essays and open-ended assignments, tools like Edcafe AI’s Assignment Grader let you upload rubrics, set grading instructions, and edit feedback before students see it. For quizzes, look for tools that generate contextual explanations you can customize. For real-time support, student-facing chatbots with constrained behavior work well. Start with one tool for your biggest pain point, test it for two weeks, and expand from there.

How do I explain to students that I’m using AI for feedback?

Be direct. Tell them: “I use AI to generate the first draft of feedback based on our rubric, then I review and personalize it before you see it. This lets me give you more detailed feedback, faster.” Most students appreciate transparency and understand that AI helps you give them better support. Avoid hiding it. If they find out later, it damages trust.

Can AI feedback introduce bias or unfairness?

Yes, if you’re not careful. AI can reflect biases in training data or misinterpret student work. That’s why human review is essential. Always read AI-generated feedback before students see it, and watch for patterns: does it consistently praise certain writing styles over others? Does it misunderstand cultural references or non-standard English? Your review is the safeguard against bias.