AI is changing how we work, learn, and create. A Microsoft study found that, as of 2025, about one in six people globally regularly use generative AI tools. In education, responsible use of AI is one of the most urgent topics for teachers, admins, and policymakers.

86% of students globally now use AI in their studies, and more than half use it weekly. Schools are among the heaviest users of generative AI across industries, and yet trust is low.

Accenture research shows only 35% of people trust how organizations use AI, and 77% say organizations must be held accountable for misuse. That gap matters.

This guide covers what responsible AI means for you, the risks to watch for, and practical steps to keep AI supporting learning without sacrificing fairness, privacy, or integrity.

Feeling overwhelmed? Start with the definition below, then skip to the 8 Practical Steps section. You can come back to the principles and risks later.

What Does Responsible Use of AI Mean?

Responsible use of AI means using AI in ways that match human values, legal rules, and ethical principles. UNESCO puts it simply: responsible AI protects human rights and dignity while promoting transparency, fairness, and human oversight.

It’s not just about checking boxes. It’s about building trust, reducing harm, and making sure everyone benefits. That means being clear about how AI works, who it affects, and how humans stay in charge of decisions.

In schools, everyone has a role. Admins, teachers, developers, and students all help shape how AI supports learning.

Ethical vs. Responsible AI: What’s the Difference?

People often use “ethical AI” and “responsible AI” interchangeably. They’re related, but different.

Ethical AI is about big ideas: fairness, privacy, how AI affects society. Responsible AI is more practical. It deals with accountability, transparency, following rules, and how AI is used day to day.

In your classroom, you’ll use both. Responsible AI shows up in three ways: setting policies, getting consent, and ensuring FERPA compliance.

What’s FERPA? The Family Educational Rights and Privacy Act, the federal law that protects student education records.

Ethical AI shows up when you talk about bias, equity, and how AI affects different learners. Our AI literacy guide walks through both so you and your students can use AI thoughtfully.

The Risks of AI in Education

Before you can use AI responsibly, you need to know what can go wrong. Here are the five main risks and what they mean for your classroom.

AI brings real promise. It can speed up research, personalize learning, automate routine tasks, and expand access to information. But it also carries important risks:

| Risk | Description |
|---|---|
| Academic Integrity and Misuse | Students may use AI irresponsibly to generate work presented as their own. Concerns over cheating and improper AI usage have become a flashpoint in education policy discussions. |
| Bias and Fairness | AI systems learn from data that can reflect societal biases. Without careful evaluation, they might generate outputs that perpetuate unfair treatment or reinforce stereotypes, potentially disadvantaging some learners. |
| Data Privacy and Security | Large AI models often rely on sensitive data. When those datasets include student or academic information, institutions must ensure data privacy protections, clear consent practices, and secure storage. |
| Hallucinations and Misinformation | AI models sometimes produce inaccurate or invented information, known as AI hallucinations. This can mislead learners or weaken the credibility of academic output if left unchecked. |
| Uneven Adoption and Equity | Unequal access to AI training and tools can widen educational disparities, leaving some students behind in digital skills and responsible use practices. |

Why Bias in AI Matters: A Real-World Example

Bias in AI is not theoretical. Consider the COMPAS algorithm, used by some courts to predict recidivism. ProPublica’s analysis found that when the algorithm was wrong, it was wrong in different ways by race.

Black defendants who would not be rearrested were labeled “high risk” at nearly twice the rate of white defendants in the same situation. The algorithm didn’t account for discriminatory policing practices, leading to unfair outcomes.

In education, similar risks exist. AI used for grading, placement, or feedback can perpetuate bias if training data or design choices are not carefully reviewed. That’s why regular audits and human oversight matter.

When vetting AI tools for grading or placement, ask vendors how they test for bias across demographic groups. Review sample outputs with your team before rolling out to students, and look for patterns that might disadvantage certain learners.
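One way to look for the kind of pattern ProPublica described is to compare false positive rates across groups: of the cases where the true outcome was negative, what share did the tool flag anyway? The Python sketch below is a minimal illustration, assuming you can export a tool's decisions alongside what actually happened. The function name, the record layout, and the sample records are all hypothetical and made up for illustration; they are not any vendor's API or real data.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return, per group, the share of truly negative cases that were flagged.

    Each record is a (group, flagged, actual) tuple:
    `flagged` is the tool's decision, `actual` is what really happened.
    """
    negatives = defaultdict(int)          # truly negative cases per group
    flagged_negatives = defaultdict(int)  # of those, how many the tool flagged
    for group, flagged, actual in records:
        if not actual:
            negatives[group] += 1
            if flagged:
                flagged_negatives[group] += 1
    return {g: flagged_negatives[g] / negatives[g] for g in negatives}


# Made-up illustrative records (hypothetical, not real student data)
sample = [
    ("group_a", True, False), ("group_a", True, False),
    ("group_a", False, False), ("group_a", False, False),
    ("group_b", True, False), ("group_b", False, False),
    ("group_b", False, False), ("group_b", False, False),
    ("group_a", True, True), ("group_b", True, True),
]

rates = false_positive_rates(sample)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} of negative cases were wrongly flagged")
```

A large gap between groups does not prove bias on its own, but it is a clear signal to ask the vendor questions and review more samples by hand.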

Understanding AI Hallucinations

AI hallucinations occur when models produce plausible-sounding but false information. They can invent statistics, fake citations, or confidently state incorrect facts.

Our guide on how to avoid AI hallucinations walks through four red flags to watch for and how to verify outputs before using them in class. Teaching students to spot and question suspiciously perfect details or vague sources is part of building AI literacy.

Key Ethics and Principles for Responsible AI

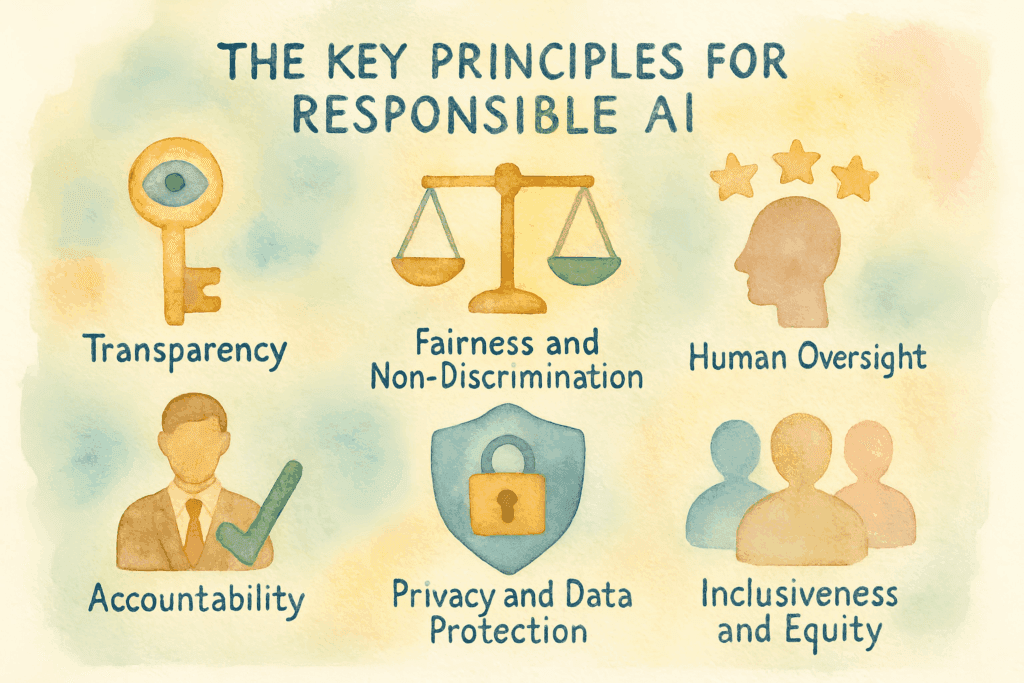

Now that you know the risks, here are the six principles that guide responsible AI use. Guidelines from UNESCO, OECD, and leading educational institutions worldwide converge around them:

- Transparency. People using AI should understand how it works, its limitations, and what data it uses. Transparency builds trust and accountability.

- Fairness and Non-Discrimination. AI systems must be evaluated to prevent bias and ensure equitable outcomes across diverse learners.

- Accountability. Clear ownership of impacts and outcomes ensures that when harm occurs, someone can act to correct it. Accountability mechanisms are essential from developers to educators.

- Privacy and Data Protection. Responsible AI implementation requires strict adherence to privacy standards and ethical data handling.

- Human Oversight. AI should augment, not replace, human judgment. Human control prevents over-reliance on automated output.

- Inclusiveness and Equity. AI in education must support equal opportunities, with special focus on underserved communities and learner needs.

These principles form the backbone of global frameworks adopted by 194 UNESCO member states. They’re also reflected in institutional guidelines, such as those from Stanford and CSU.

Who’s Responsible? Governance in Schools

Principles are great, but someone has to enforce them. Here’s how to set up clear ownership in your school.

Someone has to own the decisions and the consequences. IBM warned in 1979 that “a computer can never be held accountable, therefore a computer must never make a management decision.” Decades later, that principle is more urgent than ever.

AI can’t be held accountable, but people can.

Schools need to decide who is in charge of:

- Policy and compliance (like AI usage policies and FERPA alignment)

- Tool selection and vetting (which AI platforms are approved)

- Ongoing review (quarterly audits of AI outputs)

- Student and parent communication (explaining how AI is used)

Quick win: Put one person in charge, such as your tech coordinator or an assistant principal. What matters is that someone enforces the policies and updates them as AI changes. A policy from six months ago might already be outdated.

Responsible Use of AI in the Classroom: 8 Practical Steps

You’ve seen the risks, principles, and governance structure. Now here’s what you can do in your classroom, starting today. Pick one or two to begin with.

1. Teach AI Literacy

Help your students understand how AI works: its strengths, its limits, and the ethical questions it raises. That’s AI literacy. When students get it, they use AI thoughtfully instead of blindly.

The UK and California State University both have programs you can borrow from.

CSU’s student guidelines on ethical vs. unethical AI use (writing help, research, and more) adapt well to K–12.

Try this: Build AI literacy into your curriculum. Short lessons or quizzes work.

Ask students to spot AI in their daily lives (Netflix recommendations, Spotify, and the like). Stanford’s AI Teaching Guide has activities and discussion prompts.

2. Establish Clear Policies

Schools that adopt AI should write down clear expectations around acceptable use, documentation, and consequences for misuse. Formal policies reduce confusion and support fairness.

Try this: Create a clear AI usage policy that outlines which tools and uses are allowed (for example, AI for research and brainstorming, but not for producing work submitted as original).

Have students and parents sign an agreement at the start of each term. ISTE and CoSN offer resources for K–12 AI policy development.

3. Teach Students How to Cite AI

When students use AI to draft, brainstorm, or revise, they must cite it. Transparency matters.

Provide clear citation guidance and model it in your own work. APA and MLA both have formats for ChatGPT and similar tools.

Try this: Share a simple citation template with students: “This [essay/section] was drafted with assistance from [AI tool name] and revised for originality.”

For formal citations, follow APA or MLA guidelines.

Discuss what counts as “significant” AI use vs. light editing.

4. Give Students a Decision Framework

Students face gray areas: Is using AI to brainstorm cheating? What about to check grammar? The CSU AI Commons mentioned earlier suggests questions your students can ask themselves:

- Am I using AI as a tool to learn and create, or as a shortcut to avoid effort?

- Am I adding my own critical thinking, creativity, and effort to AI outputs?

- Would I be comfortable explaining how I used AI to my teacher or peers?

- Does this use align with my school’s academic integrity policies?

Pro tip: Have students answer these in writing before a major assignment. It creates a record of their intent and sparks better reflection than a verbal check-in. You can adapt the questions for any grade level.

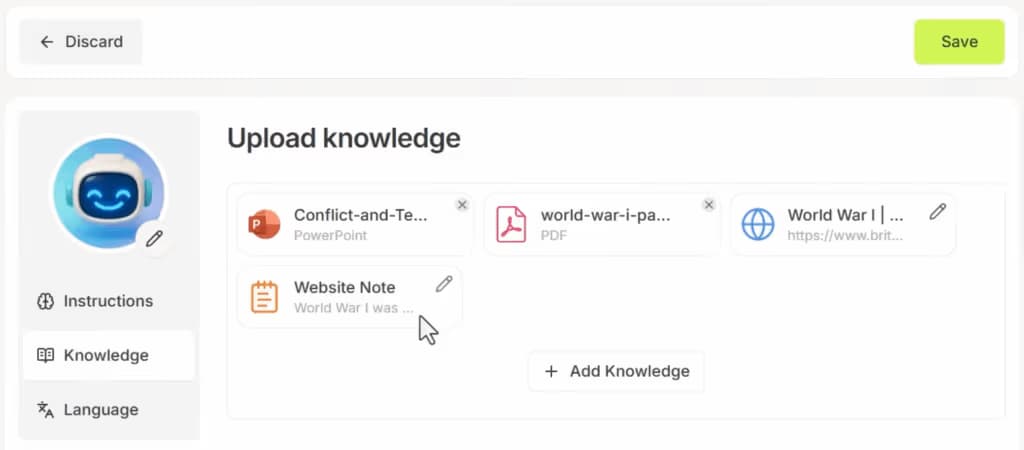

If you use AI chatbots in class, choose ones with educational guardrails. Edcafe AI’s chatbots let you control what the AI can access and how it responds.

You can restrict the chatbot to only use your uploaded materials (textbooks, slides, notes) and customize its instructions to guide students through problems step-by-step rather than giving direct answers. This keeps students engaged with the learning process.

5. Embed AI With Human Oversight

AI should assist teachers, not replace them. Maintain strong human oversight over grading, assessment design, and academic decision-making.

In a good workflow, AI handles repetitive tasks (like grading multiple-choice questions) while you focus on open-ended assignments that need human judgment.

Edcafe AI’s Quiz and Assignment Grader follow this workflow. The AI generates initial feedback on student work in seconds. You open the submission, read the AI’s assessment, adjust the tone or add specific examples, then release it to students. The time you save on routine grading goes toward deeper feedback on complex assignments.

Try this: Set a rule for yourself: never share AI-generated content with students without reviewing it first.

Check for accuracy, alignment with your lesson objectives, and appropriateness for your class. Our guide on human-AI collaboration in education has more on this.

6. Protect Privacy

Protecting privacy is crucial. Schools must make sure the AI tools they use keep student data secure, and must obtain consent before that data is collected or shared.

FERPA requires schools to protect student education records and, in most cases, to obtain written consent before disclosing personally identifiable information from those records to third parties.

When evaluating AI tools, ask vendors about their privacy practices. Look for platforms that encrypt data in transit, don’t train AI models on student work, and let you delete student data when needed.

Try this: Have students and parents sign consent forms for any tool that collects or processes their data.

Choose AI platforms that provide data encryption and clear privacy practices. Review tools against your district’s data classification (what counts as “moderate” or “high” risk).

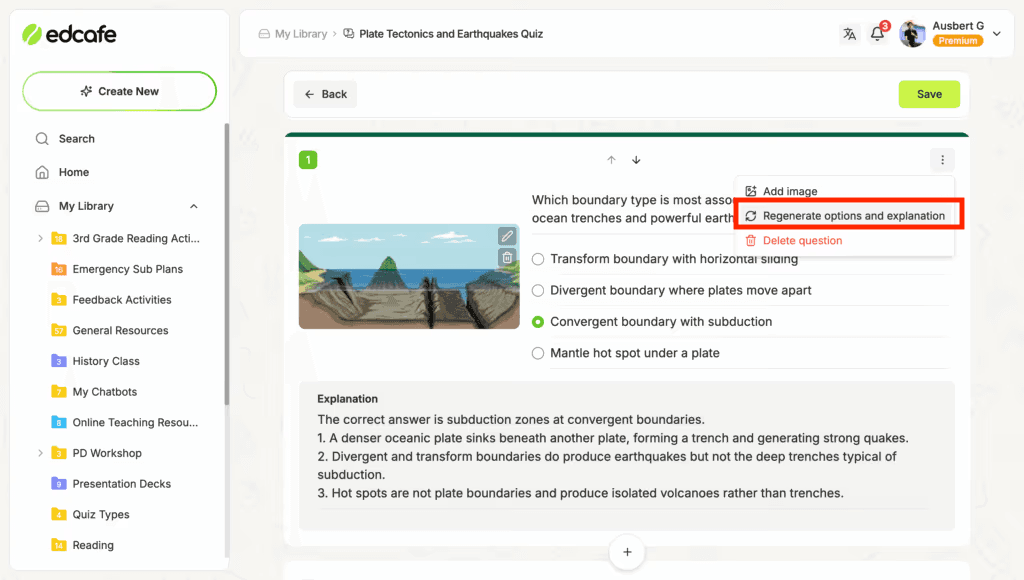

7. Regularly Audit AI Outputs

Bias audits and systematic reviews of AI results help identify issues before they affect learners. Research from Demos Helsinki on ethical AI shows that AI systems can produce biased or unfair results if not carefully reviewed.

Tools that let you review outputs before sharing make auditing easier.

Edcafe AI shows you every AI-generated quiz question and answer explanation before students see them. If a question feels off or an explanation misses the mark, you can edit it directly or regenerate it with one click. This makes regular audits part of your normal workflow instead of a separate task.

Try this: Set up a quarterly audit process where teachers or tech staff review AI outputs for biases.

Encourage peer reviews and student feedback on AI tools to identify potential issues.
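If reviewing every output each quarter isn't realistic, a random sample keeps the audit manageable and avoids reviewers only picking items they already suspect. Here is a minimal Python sketch of that idea, assuming you can export a list of output IDs from your tool. The function name, the ID format, and the sample size are hypothetical choices for illustration, not features of any particular platform.

```python
import random


def pick_audit_sample(output_ids, sample_size, seed):
    """Pick a reproducible random subset of AI outputs for human review."""
    rng = random.Random(seed)                 # fixed seed: same picks on every rerun
    k = min(sample_size, len(output_ids))     # never ask for more than exist
    return sorted(rng.sample(output_ids, k))


# Hypothetical IDs for 40 AI-generated quiz questions from one quarter
quiz_question_ids = [f"q{n:03d}" for n in range(1, 41)]

to_review = pick_audit_sample(quiz_question_ids, sample_size=10, seed=2025)
print("Review these items this quarter:", to_review)
```

Recording the seed with your audit notes lets a colleague regenerate the same list later and confirm which items were checked.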

8. Communicate With Parents

Parents may have questions or concerns about AI in the classroom, but they are more likely to embrace AI when you’re transparent from the start.

Proactively explain how AI is used, what data is collected, and how you are protecting student privacy and academic integrity. A short letter or FAQ at the start of the year can build trust and reduce anxiety.

Moving Forward: Your Next Steps

Responsible use of AI means aligning AI with human values, legal frameworks, and ethical principles while building trust and minimizing harm.

You don’t have to do all of this at once. Pick one step and start there.

New to AI in your classroom? Start with Step 1 (Teach AI Literacy) and Step 2 (Establish Clear Policies). These two create the foundation for everything else.

If you already use AI: Focus on Step 4 (Give Students a Decision Framework) and Step 5 (Embed AI With Human Oversight). These help students use AI responsibly and keep you in control.

If you’re a tech coordinator or admin: Start with Governance. Designate who owns AI policy, tool selection, and audits. Update your policies at least once per semester.

The goal is not perfection. It’s thoughtful, intentional use that supports learning. Start with one step this week.

FAQs

What role does teacher oversight play in the responsible use of AI?

A big one. Teachers need to review AI-generated content, give personalized feedback, and make sure tools are used ethically and effectively. Human judgment catches what AI misses: bias, factual errors, and misalignment with your learning goals.

Can AI tools be used responsibly without clear guidelines?

No. Responsible use of AI requires clear guidelines and policies. Without them, the risk of misuse (such as AI-generated cheating or biased grading) increases significantly. Policies should be updated regularly as AI evolves.

How can educators teach students about the responsible use of AI?

Integrate AI literacy into the curriculum. Discuss ethics, transparency, and data privacy. Use decision frameworks (like “Am I using AI to learn or to avoid effort?”) and citation guidance. Resources like the CSU AI Commons and Stanford AI Teaching Guide offer activities and prompts.

Can AI tools help prevent academic dishonesty?

Yes, when used responsibly. AI can support academic honesty by providing structured feedback, encouraging revision, and helping students learn to cite and verify sources. The key is teaching students when and how to use AI, and when not to.